Tech:Net workshop – the road to TG23!

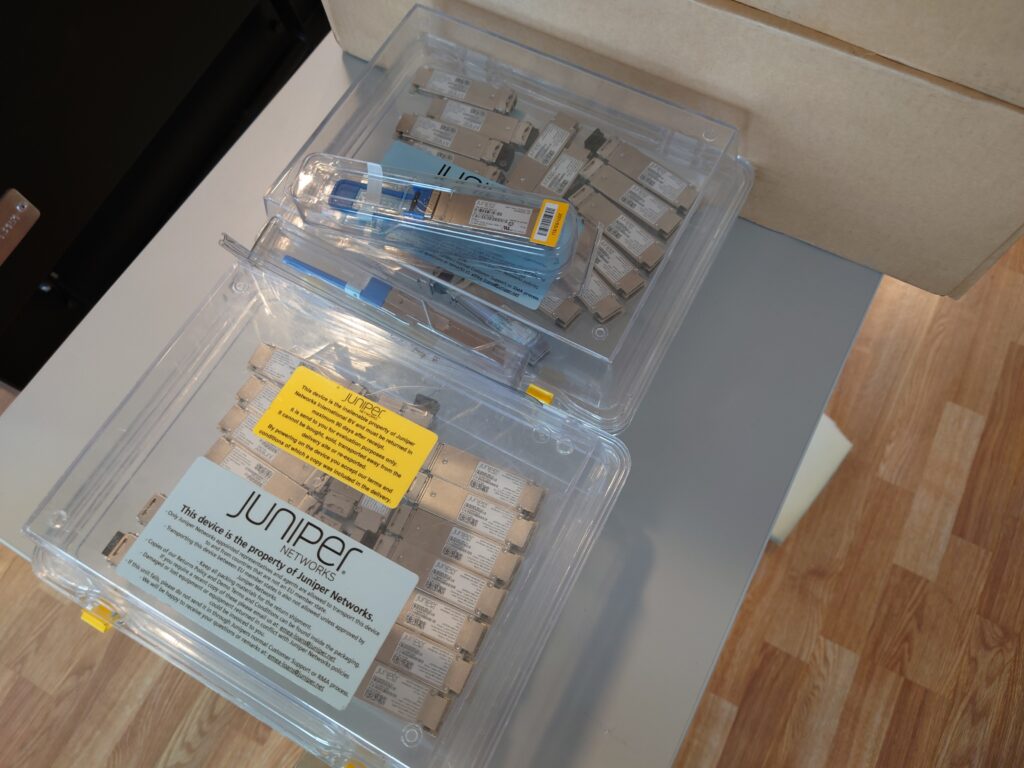

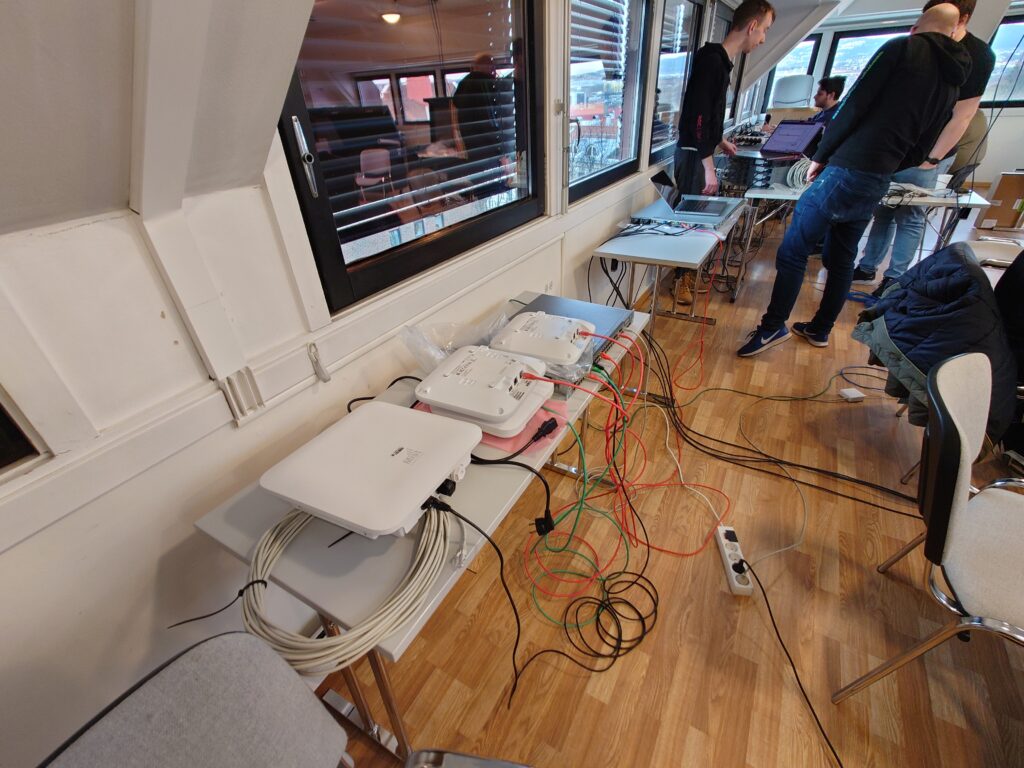

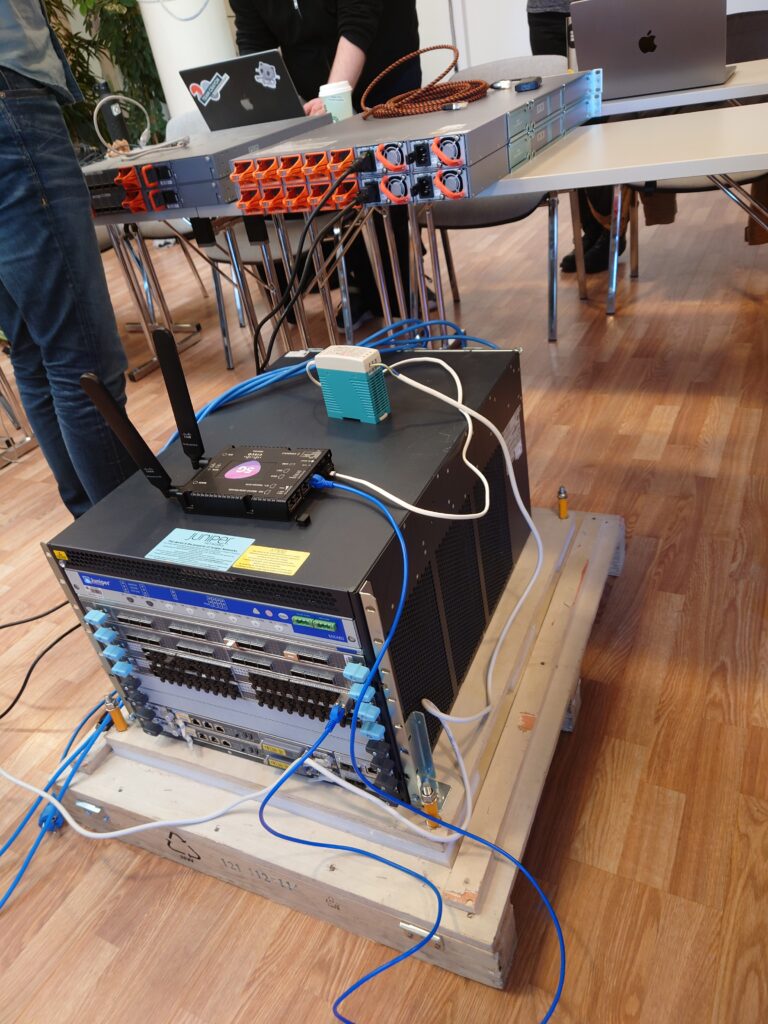

Last weekend the Tech crew held its big annual get-together, Tech:Gathering, with plenty of meetings, socializing, and assorted other activities. This weekend, Tech:Net has gathered for a pure working weekend. The equipment from Juniper has arrived, the first server from Nextron is in the house, and we are starting to configure the equipment and test that the things we want actually work!

Some of the things we have done this weekend:

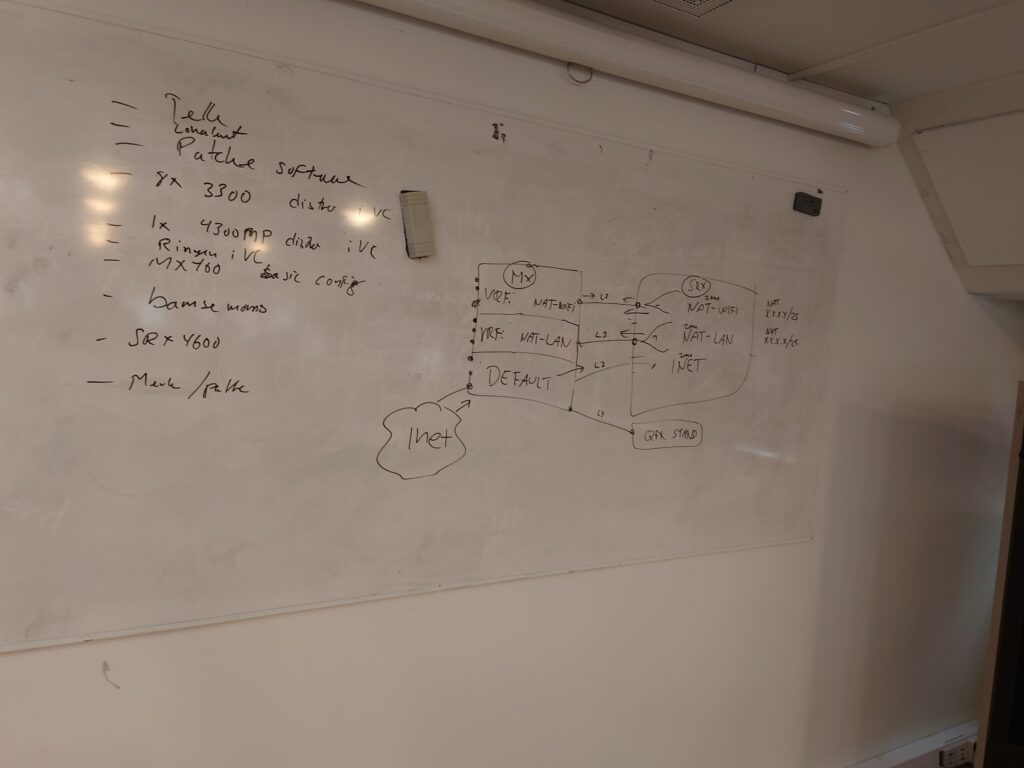

- Set up the core router

- Upgrade the software on the distro switches, apply a base config to them, and test

- Try and fail to get Mist Edge running in a VM (…)

- Configure firewalls

- Get the ring ready for use

- Test Multirate

- Fiddle with the “new” southcam

- Test the rollout automation

We have also blown the fuse at the volunteer house by overloading it.

Twice.

But fuses or no fuses, we are almost ready for the “pre-rig”: a trip up to Vikingskipet to set up equipment a couple of weeks before the regular TG rigging starts.

We can't wait for TG now!