Update from Tech on The Gathering 2019 design

The Gathering 2019 is getting closer, and it is time for Tech:Net to write briefly about this year's network design and the equipment we will use. It will not be very exciting for those of you who read about last year, since equipment-wise we will mostly do exactly the same this year as last year, but feel free to read through to refresh your memory!

TG19 in brief:

– Juniper switches and routers in the core and for participants.

– Fortinet for firewall and Wi-Fi.

– Telenor for Internet.

– Nextron for servers.

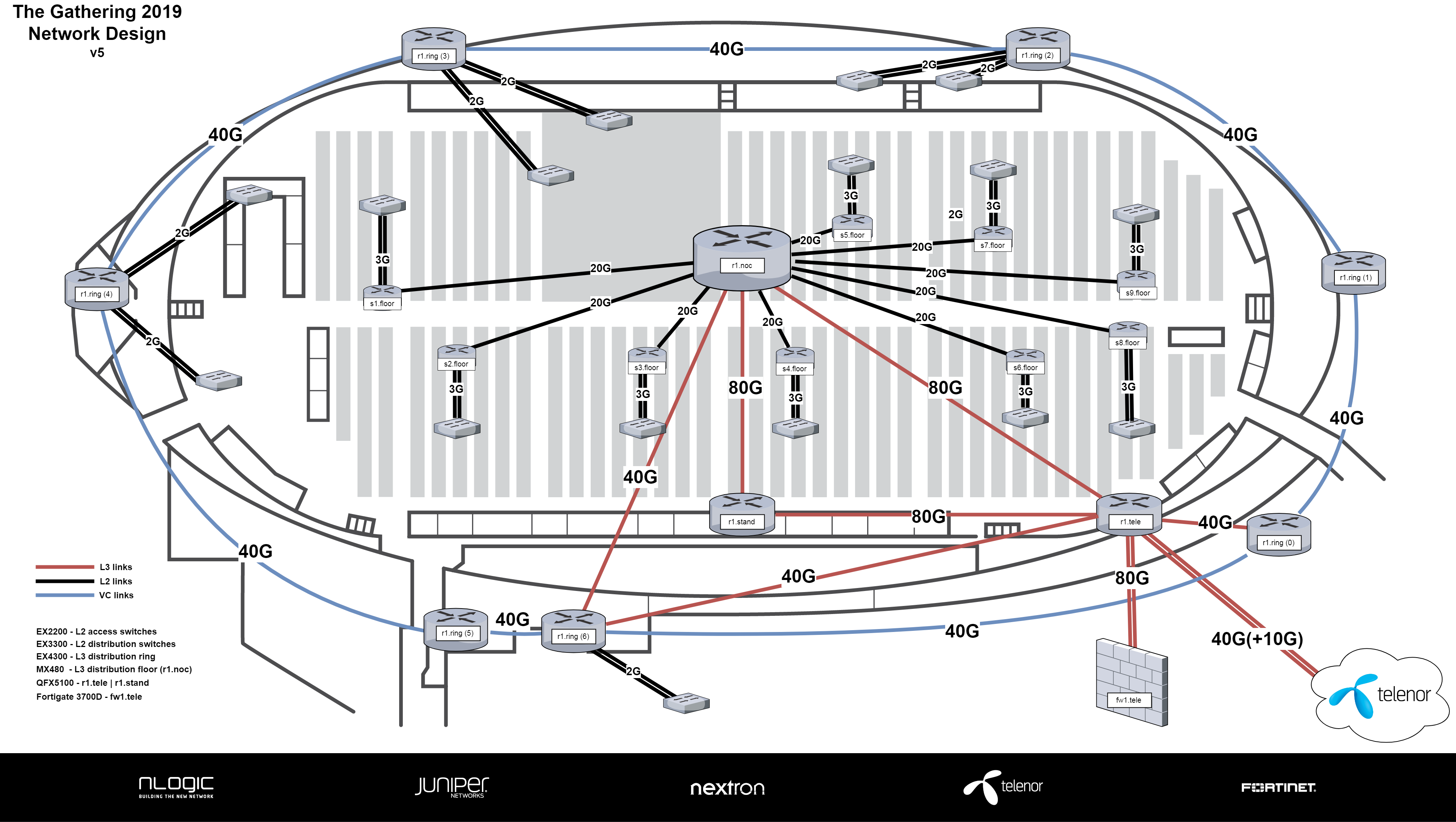

Core network

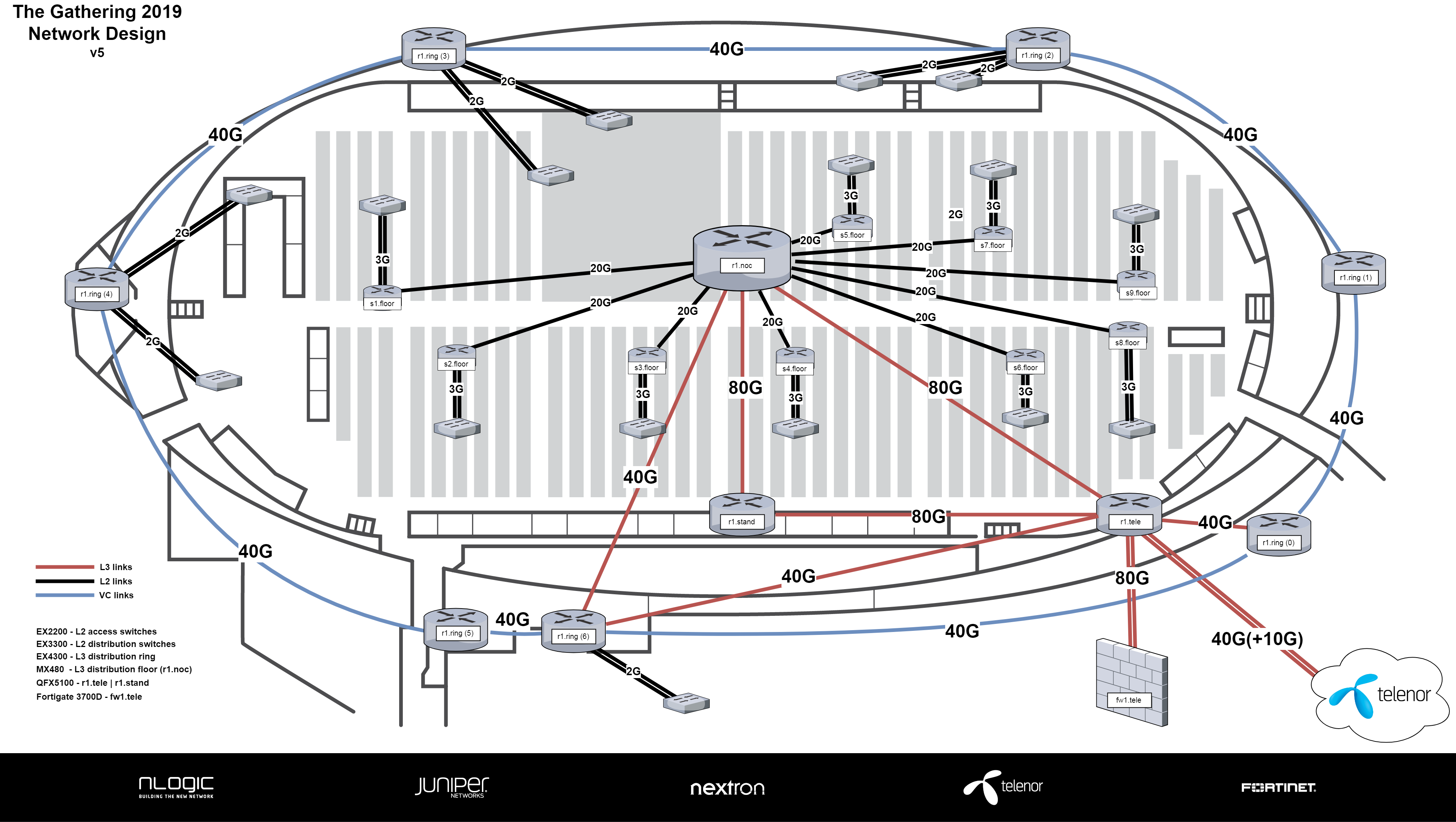

Ever since TG15, the core network (the backbone) has been based on 2x 40 Gbit Ethernet. The equipment and its placement have varied a bit from year to year, but this year it will be the same as last year.

Our core ring will consist of three routers:

– r1.tele (2x QFX5100 in VC): Border router towards Telenor and access for infrastructure servers. Located in the telematics room in Vikingskipet, where the fiber comes in.

– r1.stand (4x QFX5100 in VC): Router for game, event and infrastructure servers, as well as the wireless controller. On display in the Tech:Net stand in the sponsor street.

– r1.noc (1x MX480): Router for all participants. Located by the glass cage where the NOC sits.

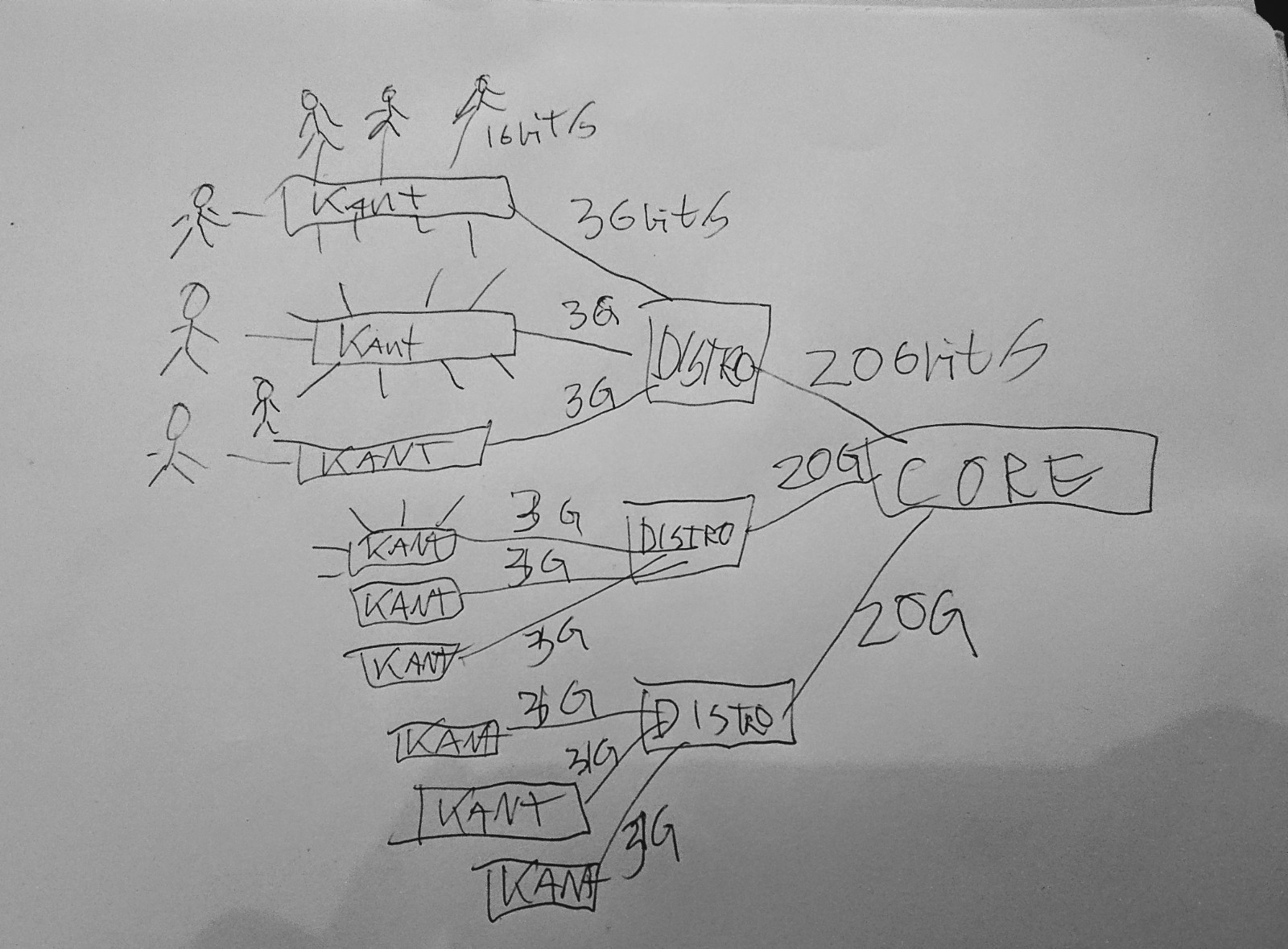

Core and distribution to participants

This year, as last year, there will be a collapsed core consisting of one Juniper MX480 and 9 distribution switches, each made up of 3x Juniper EX3300 set up as a Virtual Chassis. The MX480 is placed by the NOC, and from it we have 2x 10 Gbit Ethernet, each on its own fiber pair, down to every distro switch on the floor.

The MX480 holds the VLANs for all the table switches and relays DHCP packets to our DHCP servers.

We chose this model because we have learned over time that Juniper's EX3300 switches are not powerful enough to handle layer 3 and DHCP relay with the requirements we set. There have been several cases in earlier years where we had problems with these switches. The MX480 has much more powerful hardware and handles networks far larger than what we build at TG. We could have managed with its little brother, the MX240, but Juniper has few of those in its demo pool, so MX480s are easier to get hold of.

Access

As in previous years, access for participants (and crew) is provided by 48-port Juniper EX2200 switches. On all participant tables these are delivered with 3x 1 Gbit Ethernet. These switches run various first-hop security policies so that we can prevent attacks on our infrastructure, and prevent downtime for other participants caused by misconfiguration on users' machines. For example, we prevent participants from running their own DHCP server and causing irregularities in the network.

Deliveries to crew and miscellaneous support functions during TG

Traditionally this was done by placing standalone routers in nooks and crannies around Vikingskipet, running OSPF routing. New as of TG17 was that all of these routers became one logical unit using a technology called Virtual Chassis. All the boxes then behave as if they were one box with many line cards. That means we only have to deal with configuration in one place.

New for this year's TG is that we are extending this logical box with an extra “line card”, since at TG last year we ran out of ports on the line card located by the NOC.

This year the ring will consist of 2x EX4600 (24p), 4x EX4300 (24p) and the new extra line card, an EX4300 (48p). The two EX4600s are set up as master and backup routing engine, since they have somewhat more powerful CPUs than the EX4300s and therefore cope better with events and traffic that need processing power. Examples are relaying DHCP packets, or handling broken links between the switches.

This box (the ring) is set up with 1x 40 Gbit Ethernet between each line card.

On the line cards in the ring we connect the same kind of distribution switches as are used on the floor (EX3300). These act as distribution switches for the support crews, who connect to EX2200s placed near the crews.

We received the equipment we borrow from Juniper last week, and this past weekend a group from Tech:Net met in Oslo to unpack it. Some key devices were upgraded to Junos 18.1 (more on why will probably come in a separate post later!) and were given a basic config.

The EXes and QFXes we borrow arrived in this nice crate:

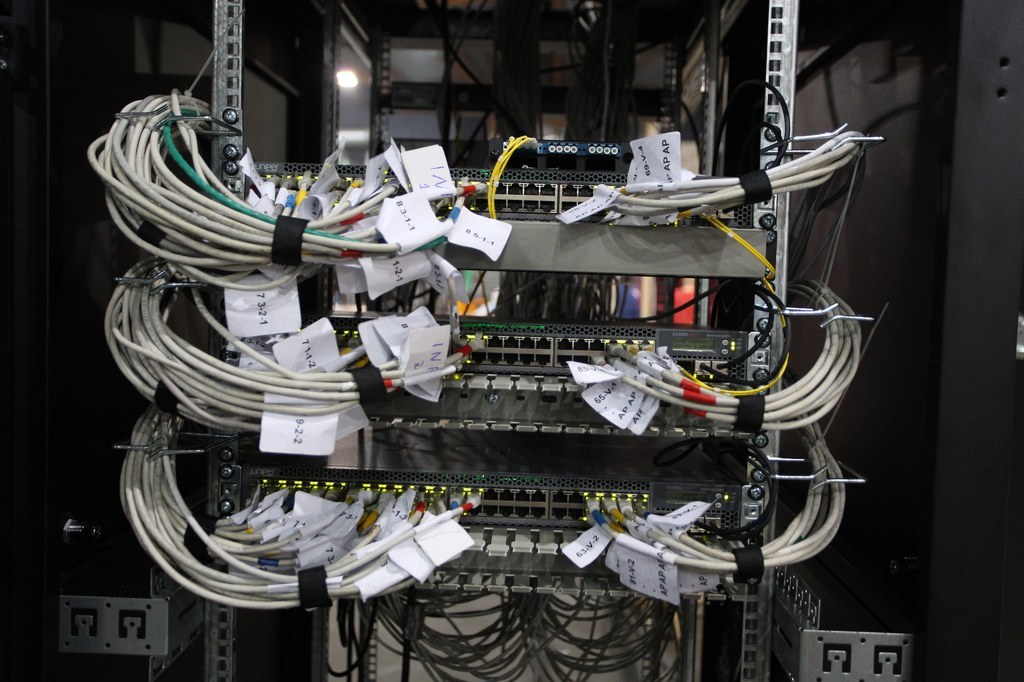

And here is the ring, prepared with basic config and running as a VC, ready to be spread out across the ship. In case anyone wonders, the EX4300s on the right in the picture have their 40 Gbit Ethernet ports at the back!

To spread the r1.ring box across all of Vikingskipet we depend on good fiber infrastructure in the ship. An enormous effort went into this a few years ago, and if you want to read about it and see pictures you can look here:

Over a number of years Wannabe, the “crew system” of The Gathering, has grown into a fairly sensible system for receiving crew applications, generating the data for ID cards, keeping track of who cleans when, food allergies, and a whole forest of other functions used to run The Gathering every single year.

And for almost as many years people have wanted to use Wannabe for events other than TG. We have listened, and for a long time the plan has been to release Wannabe as open source so that you can use it yourself, and contribute back.

The main reason it has not happened is the usual one: it requires us to go over the code and check that there is nothing obviously idiotic in there security-wise, and then we actually have to do some practical planning of how things should be organized. Not much, but some.

At least since I inherited the “main responsibility” for Wannabe at the end of 2016, and Core:Systems was “rebooted”, it has been on the cards that Wannabe's source code would be released.

So where is it?

Well, technically it is on GitHub, but because TG is a couple of weeks away we have decided that right now is not the right time to release the code, since any “catastrophically dumb bugs” would have such large consequences. So instead we are announcing that the code will be released publicly a little after TG, and that date has been set and approved as 1 June 2019.

At the same time we will also include the code behind gathering.org, since there is no reason for it to be closed.

So now you have something to look forward to!

If you have any questions, feel free to get in touch on Discord! (Finding our Discord is left as an exercise for the reader.)

If you were paying very close attention on the afternoon of Sunday 3 March, you may have noticed that www.gathering.org was unavailable for a little while. The downtime was announced well in advance (about 30 minutes) so that everyone in the crew knew what was going on (so here comes the explanation, so that everyone in the crew knows what we did).

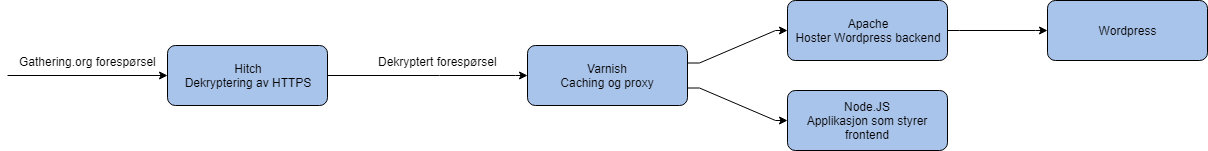

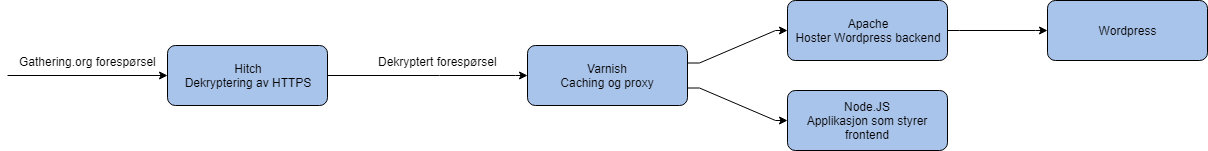

The story begins with some nerds, small and large, gathered at Frivillighetshuset in Kolstadgata 1 in Oslo. KANDU:Systemstøtte had held a meeting the day before, and we had agreed that we had to do something about how www.gathering.org worked. Before we started, the website consisted of one server with a glorious mix of Apache, Varnish, WordPress and our home-grown Node.js-based web API. The configuration was so complicated that it was easy to make mistakes that could be hard to untangle. We therefore decided to rebuild everything from scratch. This is the explanation of what we ended up with, and an intro to how it works.

HTTP and HTTPS

When you open a web page in any modern browser you are using a protocol called Hypertext Transfer Protocol (or just HTTP). The protocol handles fetching information from and sending information to websites in a standardized way. You have probably noticed that most links start with something like http://example.com. This tells the browser to try to reach example.com over the HTTP protocol.

The one problem with the protocol is that everything you send over it is fully readable to anyone listening. Some bright minds figured out that if you add an S to the end of HTTP you get HTTPS, where the “S” stands for “Secure”. When the browser contacts the server, the two agree on a shared encryption key so that they can communicate without others seeing what is sent. The method is called Diffie-Hellman key exchange (DH), and you can read more about it here.
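To make the key agreement a little more concrete, here is a toy Python sketch of the Diffie-Hellman idea. The numbers `P = 23` and `G = 5` are textbook-sized assumptions picked for readability, and the function names are made up for this example; real TLS uses far larger groups or elliptic curves, so treat this as an illustration only.

```python
import secrets

# Toy public parameters (assumed for illustration, far too small for real use).
P = 23  # public prime modulus
G = 5   # public generator


def make_keypair():
    """Pick a secret number and derive the public value that is sent over the wire."""
    private = secrets.randbelow(P - 3) + 2  # secret in the range [2, P - 2]
    public = pow(G, private, P)
    return private, public


def shared_secret(own_private, peer_public):
    """Combine our own secret with the other side's public value."""
    return pow(peer_public, own_private, P)


browser_private, browser_public = make_keypair()
server_private, server_public = make_keypair()

# Only the public values cross the network; both sides still end up with the same key.
browser_key = shared_secret(browser_private, server_public)
server_key = shared_secret(server_private, browser_public)
```

Someone listening sees `P`, `G` and the two public values, but not the private numbers, which is what makes the shared key hard to recover when the numbers are large enough.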

Before we rebuilt our stack, Apache was responsible for accepting HTTPS connections, but it was easy to create redirect loops between Apache and Varnish (where your browser is sent back and forth between Apache and Varnish forever), so we chose a different approach. We installed Hitch on the server and configured it to use an SSL/TLS certificate from Let’s Encrypt. Hitch does nothing other than accept the connection, decrypt it and pass the request on to the next stage in the process.

Caching and reverse proxy

Now that Hitch accepts HTTPS, we need to route the requests to the right place. For that we install Varnish. Varnish is really a web cache, but the software has many powerful features that make it practical to use as a proxy as well.

Since www.gathering.org consists of two different parts behind the scenes, we need to proxy (forward) all requests for https://www.gathering.org/api to WordPress, while the rest goes to a Node.js-based application at https://www.gathering.org/tg19. The advantage of a reverse proxy here is that you as a user of the website only have to deal with one web address, while the web server that receives you can use many services in the background without you noticing.
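The routing decision itself is small. The sketch below shows it in Python rather than in Varnish's own configuration language, and it is not our actual config: the backend names `wordpress` and `node_frontend` and the function `pick_backend` are assumptions made up for the example.

```python
def pick_backend(path: str) -> str:
    """Return the (hypothetical) backend that should handle a request path."""
    # Everything under /api goes to WordPress; match on a path boundary
    # so that something like /apiary is not caught by accident.
    if path == "/api" or path.startswith("/api/"):
        return "wordpress"
    # Everything else is served by the Node.js application.
    return "node_frontend"


routes = {p: pick_backend(p) for p in ["/api/posts", "/tg19/", "/"]}
```

The visitor only ever sees www.gathering.org; which of the two services answered is invisible from the outside.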

But what is a cache? A cache is an intermediate store between you and the website that makes responses from the site faster. When you connect to a web page, the web server has to generate a version of the page for you. This can be a heavy task and is often slow. What the cache does is keep a copy of the page, so that when the next person asks for the same page Varnish simply answers with the stored copy instead of having the web server generate a brand new one. This only works for a little while, because eventually the content of the site changes as new articles and the like are published, so normally Varnish can only keep pages for a few minutes at most, perhaps only a few seconds if the content changes quickly. In the case of www.gathering.org we keep the data for quite a long time, because we have set things up so that whenever some content changes, a message is sent to Varnish telling it to delete the stored copy.
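The combination of "keep it for a while" and "delete it when told to" can be sketched in a few lines of Python. This is only a model of the idea, not how Varnish is implemented; the class `PageCache`, its methods and the one-hour lifetime are assumptions for the example.

```python
import time


class PageCache:
    """Tiny in-memory page cache with a time-to-live and explicit purge."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self.store = {}  # url -> (expires_at, body)

    def get(self, url, render):
        """Return the stored copy if it is still fresh, otherwise ask the backend."""
        now = self.clock()
        entry = self.store.get(url)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: the backend is not touched
        body = render(url)   # cache miss: the slow path
        self.store[url] = (now + self.ttl, body)
        return body

    def purge(self, url):
        """Drop the stored copy, for example because the content changed."""
        self.store.pop(url, None)


backend_calls = []


def render(url):
    """Stand-in for the slow web server; counts how often it is asked."""
    backend_calls.append(url)
    return f"page version {len(backend_calls)}"


cache = PageCache(ttl_seconds=3600)
first = cache.get("/tg19/", render)
second = cache.get("/tg19/", render)       # served from the cache
cache.purge("/tg19/")                      # content changed, throw the copy away
after_purge = cache.get("/tg19/", render)  # regenerated by the backend
```

Because the purge removes stale copies right away, the lifetime can be long without visitors seeing old content.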

What happens next?

Now that the request has passed through Hitch and Varnish, it either ends up directly in our Node.js application or it hits Apache, which serves the WordPress site. Overall, our architecture looks like this.

How we use WordPress and our home-made frontend is something we will probably come back to in a later blog post (and maybe you will get to have a look at the code?).

So what is the deal with fiber, really? And how does it work when we pull three network cables from a table switch (or edge switch) to the distro switches in the center aisle? I thought I could write a bit about that! Even though I am reeeeeally not the best candidate, since strictly speaking I do not work with this day to day and am mostly exposed to it at TG.

A quick reminder: when I write about the kind of network cables you plug into your PC, I mostly write TP cables, which stands for “twisted pair”. Each “conductor” comes in a pair, and the pairs are twisted; cut open an old cable and you will see it right away.

Aggregates

But anyway, let me start with an aggregate!

An aggregate, or a port channel, or a bundle, or a bond, or any of the many other names for the same thing, is a way of combining two or more physical cables so that they can be treated as one at the logical level. This is usually done for two reasons: to make things more robust (when your “neighbor” at TG pulls out the cable that connects the whole switch to the internet, there are still two left) and to increase bandwidth (3 Gbit/s instead of 1 Gbit/s). This happens at such a low level in the stack that things like routing of IP packets do not enter the picture.

Aggregates can be set up on ordinary TP cables, fiber cables and really most things, depending a bit on the equipment, as long as both sides agree.

Roughly speaking there are two ways to operate one: either all members (cables) of the aggregate are active at the same time, or one is active and another is passive but is brought into use on failure.

How traffic is balanced across several cables when all members (cables) are active varies from implementation to implementation. Today there is also the standard 802.3ad (since moved to 802.1AX), which makes things easier. You can set this up manually, in which case it is obviously critical that you configure it identically on both sides. A much better alternative is to use LACP, the Link Aggregation Control Protocol. With LACP you still have to configure everything on both sides, but failover happens by itself and you can be sure that both sides see things the same way.

We had an example of what happens without LACP at… either TG15 or TG17. We were sitting in the NOC and got a radio call from another crew that needed help… because they could only reach half the internet! Literally. They could reach every other IP. After much laughter and wonder we sent someone to look at it, who could confirm the rather incredible symptoms. It turned out they were using an (older) switch that still had old config on it, with static load balancing of a link aggregate. With LACP this would not have happened.
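The "every other IP" symptom is easy to reproduce on paper. The Python sketch below assumes a two-member aggregate where one member does not actually lead anywhere, and a deliberately simplified hash (destination address modulo the number of links). Real switches hash on more fields, and the exact cabling fault back then is not something this sketch claims to know; the function names are made up for the example.

```python
import ipaddress


def pick_member(dst_ip: str, member_count: int) -> int:
    """Simplified load balancing: destination address modulo number of links."""
    return int(ipaddress.ip_address(dst_ip)) % member_count


def reachable_static(dst_ip: str, member_up: list) -> bool:
    """Static aggregate: keeps hashing over all configured members, dead or not."""
    return member_up[pick_member(dst_ip, len(member_up))]


def reachable_lacp(dst_ip: str, member_up: list) -> bool:
    """With LACP a dead member is taken out, so traffic only uses live links."""
    live = [index for index, up in enumerate(member_up) if up]
    if not live:
        return False
    chosen = live[pick_member(dst_ip, len(live))]
    return member_up[chosen]


links = [True, False]  # second member is configured but not working
static_view = [reachable_static(f"192.0.2.{host}", links) for host in range(1, 9)]
lacp_view = [reachable_lacp(f"192.0.2.{host}", links) for host in range(1, 9)]
```

With the static setup, every address that hashes onto the dead member disappears, which with two members is exactly every other address. With the dead member removed from the bundle, everything is reachable again, only with less bandwidth.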

But anyway, link aggregates and LACP are absolutely central both to TG and to the internet as such. We use them on practically every link we have, including towards the internet: we have 5 pairs of 10 Gbit/s fiber towards Telenor, in one aggregate.

Fiber

So, fiber then? What IS the deal, really?

Well, let us start with TP. What is the deal there?

TP is made for LAN. TP has a maximum length of 100 meters (you can usually get away with more than 100 meters, but the spec says 100 meters max), and if you want higher speeds you need new cable.

It is probably no surprise by now, but fiber has completely different properties. For one, fiber has maximum lengths somewhere between 300 meters and 50,000 meters, depending on the technology in use. And just as important: fiber that was laid ages ago can still be “upgraded” simply by replacing the equipment at the ends. For a LAN this does not matter: pulling a new cable from the middle of Vikingskipet to the tables is not hard. But if you have a 10 km fiber cable in a trench, it is very nice to know that you can upgrade the speed without replacing the cable.

Fiber cables are also used in different ways, but the simplest is to work with dedicated fiber pairs. Between two network elements you connect two fibers as a pair: one for sending data and one for receiving. People therefore often say “pair” as if they meant cable. Not unlike how a TP cable consists of several copper wires.

But we need to talk a bit more about this 300 meters versus 50 km business. There are mainly two types of fiber people talk about: multimode and singlemode. The names come from the thickness of the “core” of the fiber, if I am not entirely mistaken, but in practice it is not something you think about. The short version is, in any case, that you want singlemode.

For the record: singlemode is typically yellow, while multimode tends to be anything other than yellow.

Multimode works within buildings, inside a data center, and so on. Typical distance limits range from 100 to 500 meters. Even in Vikingskipet that is not a lot. The main reason you find a lot of multimode? It is a tiny bit cheaper…. Today the difference is not very large.

But one reason multimode causes problems is that, for both TP and fiber, it is entirely normal to splice cables. This is done on a large scale at TG: for example, we pull fiber cables from the distros on the floor up to the ceiling, where we splice them onto a permanent bundle of fiber cables that runs down to the NOC. But it also happens on the internet as such: when fiber is laid, it is practically never a single fiber cable. You pull a multi-fiber cable, anything from 4 pairs to over a hundred. Say you start with 96 pairs. At the next point this may need to be split, because it is going to several different customers, each a good distance away. So you pull two new 96-pair cables in “separate directions”, and you have made yourself a Y.

At the next stage it might be split 3 ways, and then perhaps it makes more sense to use 24 pairs? Or into the customer you might pull 8 pairs, even though the customer so far only plans to use two.

The result is that there are lots of cables in trenches with “unused” pairs that are not connected to anything at either end. This is because digging is expensive, so you would rather not have to do it again because you skimped on fiber.

Most of this is singlemode. And you cannot connect singlemode and multimode together; for that you need “active” equipment in between.

A little WDM?

On longer stretches you also have to talk about WDM, “wavelength-division multiplexing”, where you send several signals over the same cable using different “colors”. Fiber, as you probably know, uses light instead of electrical signals. Light is transmitted at a wavelength, which is the same thing as color. By multiplexing light of different colors you can send different, otherwise independent, signals over the same physical fiber cable. This requires a fair amount of expensive equipment, but it is how the backbone of the internet is built.

As a small side note: when people talk about “dark fiber” they mean a fiber signal without WDM going from A to B. For example, there is dark fiber between Vikingskipet and the Telenor router that TG gets its internet from. Note that it does not have to be just ONE cable. Between TG and Telenor, for instance, there are several splice points in the ground.

But we do not use any WDM for TG. So let us leave it out. (Wait…. too late!)

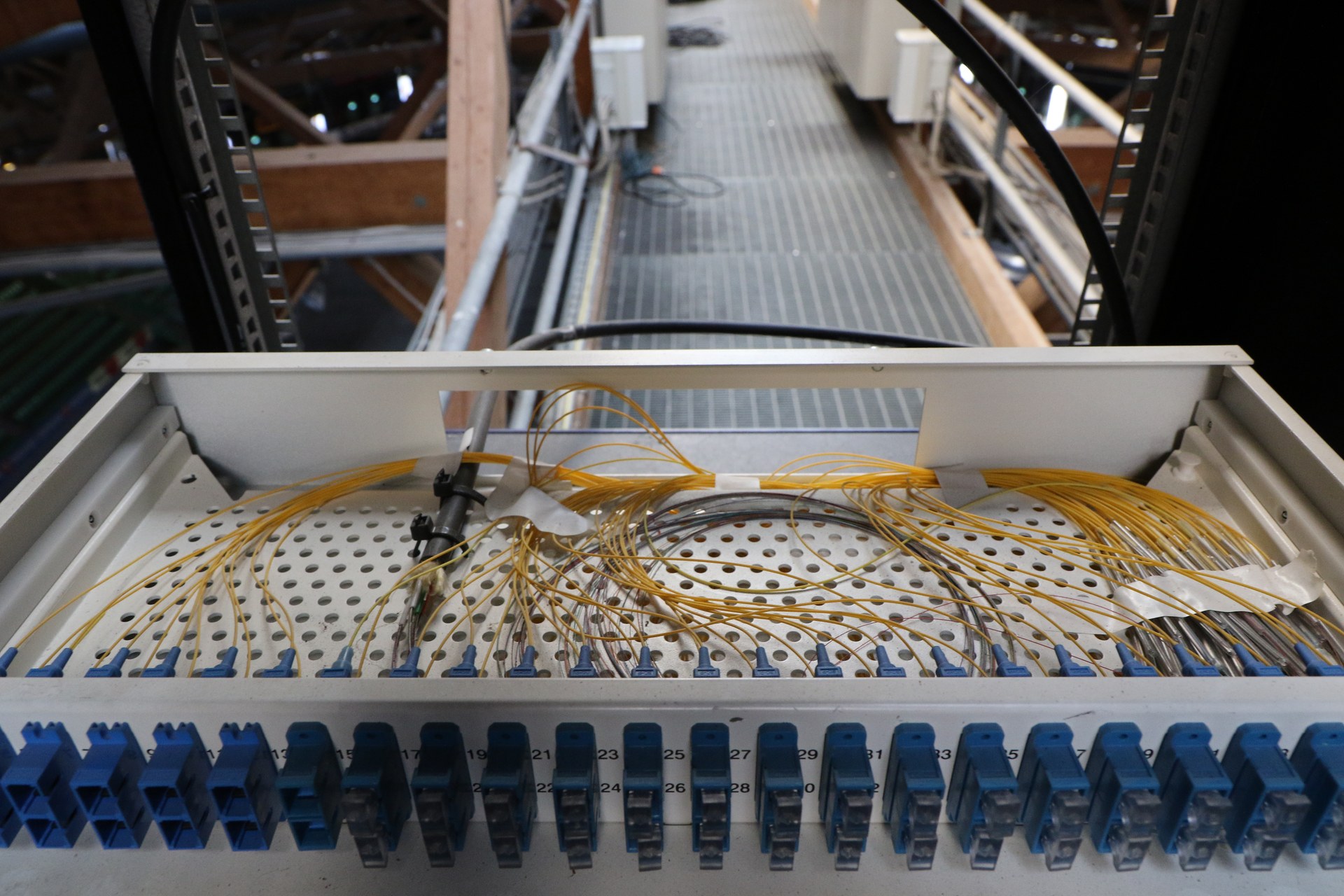

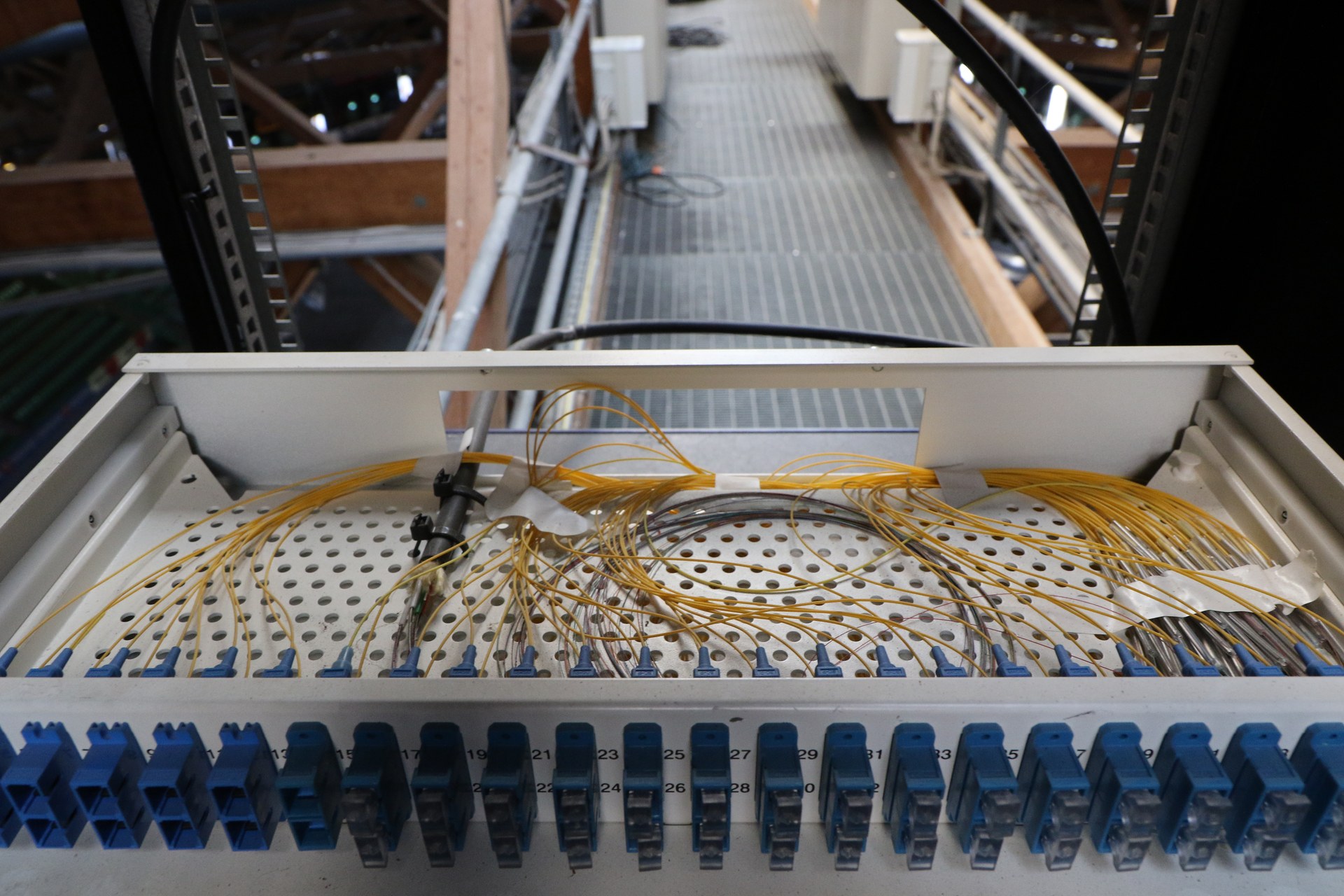

What we do use is singlemode. We have both permanently installed fiber that stays there all year, and a whole lot of “patch” ranging from 1 meter to 50 meters. Patch cables are “loose” cables that are pre-terminated (they have plugs), and the term can describe anything from the cable between your PC and your home router to the fiber cables we use between our routers. To make connecting things easier there are “patch panels”: panels with lots of connectors (the female side) that let you plug patch cables onto more permanently installed cables.

In our ring (see my previous post!) we have 6 pairs in a G12 cable (12 fibers, 6 pairs). We have terminated this “nicely” in patch panels so that it is easy to connect other equipment. This means that to actually use it we need a minimum of 3 fiber cables: 1 patch cable from the network equipment to the port in the patch panel, then the G12 cable that runs between 2 patch panels, and then another patch cable to the network equipment at the other end. Up to the ceiling and between Tele and the NOC there is a G48, which is 24 pairs.

Plugs?

But what about the plugs?

What I have worked with most at TG is LC and SC, two fairly similar plugs. A trick for remembering the difference: LC is little (“liten”) and SC is big (“stor”). Into the equipment it is mostly LC.

These are just plugs; if you know what you are doing you can cut a fiber cable and swap the plugs.

For the somewhat larger cables we use MPO. There is some confusion around this, but MPO is really the plug/connector itself, which makes it easy to connect, say, a 6-pair cable to a “cassette” that splits it out into 2*6 LC plugs. To be honest I do not quite remember where we use SC (the slightly larger plugs), but the equipment itself uses LC. Both plugs are fairly similar and typically blue.

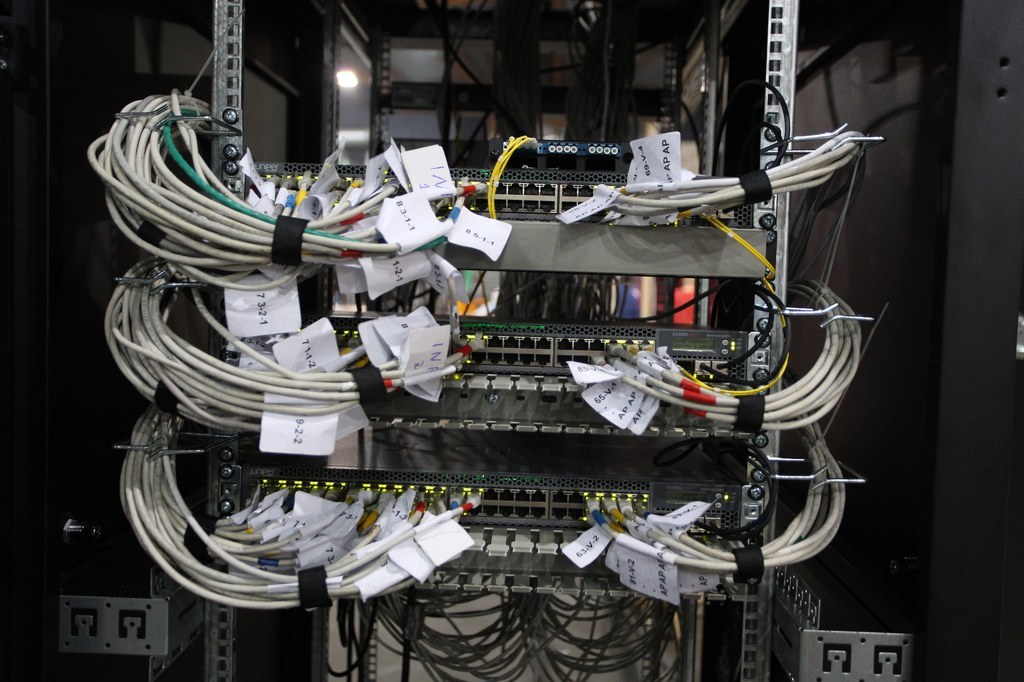

Here you see a typical distro. The grey ones are ordinary TP patch cables, the small box on top with 4 yellow cables in it is an MPO cassette, and the four yellow cables are two pairs of singlemode fiber, each pair going down into its own EX3300. Between the EX3300s you can see some black DAC cables on the right side of the picture.

Transceivers

Now we have reached the heart of it: optics! Or, almost.

With TP it is just RJ45 and off you go, but in order to support multimode, singlemode, WDM, DAC cables (I have not even mentioned these! DAC cables are typically copper, made for short distances, typically from a server to the nearest switch) and more, people figured out long ago that it is smart to make a generic interface that lets you plug in “cable-specific” modules. I believe this formally started with GBICs, but today we are talking about variants of SFP, the small form-factor pluggable module.

The idea is simple: you build a switch with, say, four 10 Gbit/s ports, but the port itself is completely useless on its own. You have to buy a “transceiver” (transmitter/receiver) to insert into the port. If you are using “dark fiber” you just buy two transceivers that work on the same wavelength and put one at each end. If you want WDM, each transceiver must have its own unique color (and you need some WDM equipment as well).

With DAC cables you have sort of cheated: there you have two transceivers and an integrated cable in between.

The optics we use mainly come in two or three variants. SFP is 1 Gbit/s; we hardly use it in practice, but we need it. SFP+ is 10 Gbit/s, and we use plenty of it. QSFP+ (quad SFP+) is 40 Gbit/s, and we use some of that too. There are also newer variants such as QSFP28, which gives 100 Gbit/s, but we do not use that at TG. GBIC is old stuff that came before SFP, but some people still use the term for things that are not actually GBICs. Don’t be that guy. You can also get TP modules! So you can insert modules that give you RJ45, or fiber.

A single transceiver costs anything from 100 kr to 10,000 kr if you shop “sensibly”. If you believe list prices, on the other hand, Juniper's 40G transceivers cost 7k USD apiece, which no sensible person pays.

(Here is original optics we borrowed from Juniper at a list price of 7k USD apiece…. no, you do not pay 7k USD for them if you actually buy them yourself)

Slots like these are pretty much never forward compatible. You cannot put an SFP+ module into an SFP slot and think “that will be fine, I will just get 1 Gbit/s instead of 10 Gbit/s”. It does not work.

On the other hand, you can happily take a QSFP and attach a “fan-out” module to get 4x 10 Gbit/s instead of 1x 40 Gbit/s.
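As a quick summary of the speeds mentioned above, here is a small Python sketch. The table only contains the form factors named in this post, and `fan_out` is a made-up helper that assumes a quad module splits evenly into four lanes.

```python
# Nominal speeds in Gbit/s for the form factors mentioned in this post.
SPEED_GBIT = {"SFP": 1, "SFP+": 10, "QSFP+": 40, "QSFP28": 100}


def fan_out(form_factor: str, lanes: int = 4) -> list:
    """Split one port into equal lanes, e.g. one 40 Gbit/s port into 4x 10 Gbit/s."""
    total = SPEED_GBIT[form_factor]
    if total % lanes:
        raise ValueError(f"{form_factor} does not split evenly into {lanes} lanes")
    return [total // lanes] * lanes


qsfp_lanes = fan_out("QSFP+")
```

The total capacity does not change with a fan-out; it is just presented as several slower ports instead of one fast one.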

And while we are at it…. In addition to each port requiring a module, it is also common for more expensive network equipment to let you swap out whole groups of ports; on really large routers these are usually called “line cards”. You can then buy a line card with lots of 10 Gbit/s SFP+ ports, for example, or a line card with eight 100 Gbit/s ports. Here it really is possible to future-proof yourself: new line cards often appear long after an advanced router was released. Even on “smaller” boxes, like the ones we use as distros at TG, there are small modules we can swap or buy in addition.

Line cards also come in several variants, mainly in two categories: line cards that give you more interfaces/ports, and line cards that give you more “features”. The latter are basically the “CPU” of a router. At TG we have a Juniper MX480 core router in which we run two “routing engines” (REs, or CPUs), where one is backup for the other. These routing engine cards really are a fully fledged PC: Intel CPUs and RAM and basically what you would expect, but with a specialized motherboard. Such line cards can be swapped while running, so we are quite well protected here.

So really the MX480 is “just” a chassis with a backplane that lets the line cards communicate lightning fast. And 4 power supplies (which we connect to 4 different fuses).

Here we have an MX480 with two REs and two line cards with 10 Gbit/s ports to the participant distros and 40 Gbit/s to the rest of the network.

Hi blog!

Today I thought I would blog a bit about gondul and open source, and also a bit about what this means for developers/”IT people”. If you have not heard of any of these things before, fear not: an introduction is coming!

As a fresh programmer there is one thing you are taught: copy/pasting code is bad practice.

If you solve a problem once, it is a bit silly to copy the code and paste it somewhere else to solve it again.

Imagine you found an error (or a bug, as the fancy term goes): then you have to remember all the places you copied the code to, and update them. That is cumbersome!

That is why programming has functions, which are small pieces of code you can reuse several times.

It is exactly the same concept as mathematical functions: if you have f(x) = x^2, then f(2) = 2^2 = 4.

Here the same expression has been reused by calling it f(x) instead of x^2. That is much more reusable if you later want to change the function to compute (x^2)-1 instead.
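In code, the same idea could look like this small Python sketch (the variable name `results` is just something picked for the example):

```python
def f(x):
    """The one place where the rule lives: square the input."""
    return x ** 2


# The function is reused in several places without repeating the formula.
results = [f(2), f(3), f(10)]
```

If the rule ever has to become (x^2)-1, only the single line inside `f` changes, and every place that calls `f` picks up the new behavior.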

My point with this little introduction is that an important principle in programming is to have reusable code, that is, functions, which … can be reused throughout the program.

It is often the case that you need to do the same things in several different programs. For example, many websites need login functionality. Should you build that from scratch every single time?

It may not be the biggest job, you might think, but it is rather important to get login in particular right.

If you have ever received an email saying you should change your password because someone managed to get hold of your login details, you have most likely used a website that did not have good enough security around login/user information.

It is better to do things right once and reuse that, than to do it again every time and perhaps forget something.

There is a saying for this: there is no point in reinventing the wheel.

Okay, but what does this mean for programming? And me? And gondul?

In the programming world you can often “pull in a package” that does something standard for you, such as handling login. Then you do not have to think very much about it. This code often comes from projects that are open source, which means anyone can go in and read the code, for example to understand how it works.

Now, every so often there are people who want to change how this code works. And perhaps others want the same new feature? That is where open source shines.

You can send in a “patch”, which is a “change” to the code. This can be to fix a bug or to add entirely new features.

Then the person (or people) who “own” the code will look over the changes and possibly add them.

This used to be done mostly by email (and still is in some places), but nowadays it is often done through a tool that handles such patches, e.g. “git”, usually together with a website, à la GitHub.

If the owner(s) of the code think it was a useful patch, they will include it in the code and publish a new version, so that people can use the new version that solves this problem or has this new feature. In the end, people tend to use this code in their own programs, precisely to avoid reinventing the wheel all the time.

Okay, that became a bit more about version control (systems) than you might care about, so what does this mean for me and gondul?

First of all: what is gondul?

(Someone is welcome to call me out if I get this wrong!)

Gondul is a system for managing (primarily) the network equipment that powers The Gathering every year.

After people realized that configuring switches one by one, or going through all the switches to test that they work, was time-consuming, someone thought that it must be possible to automate this!

So what does gondul actually do? The README file in gondul is welcome to tell you in detail, but in short it is a system that checks that, among other things, all the participant switches are in the correct state.

In addition it supports checking that things are otherwise “right”, and also pushing configuration out to the switches.

For example, it is very easy to see if someone has pulled the power on a switch, because gondul shows this by drawing the switch in gondul's “weather map” as a red switch, since it does not answer when we ask whether it is there.

Red is not good. Green is good. Not red.

Once it has its power back and has booted, it turns green again, and all is well.
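The red/green logic can be sketched roughly like this in Python. To be clear, this is not gondul's actual code: the function names, the switch names and the 30-second timeout are all assumptions invented for the illustration.

```python
def switch_color(seconds_since_reply, timeout_seconds=30):
    """Green if the switch answered recently, red if it is silent."""
    if seconds_since_reply is None:  # never answered at all
        return "red"
    return "green" if seconds_since_reply <= timeout_seconds else "red"


def weather_map(last_replies):
    """Turn 'seconds since last answer' per switch into a color per switch."""
    return {name: switch_color(age) for name, age in last_replies.items()}


# Hypothetical switch names and ages, purely for the example.
colors = weather_map({"e1-1": 2.0, "e1-2": None, "e3-4": 120.0})
```

A switch that loses power simply stops answering, so its age grows past the timeout and it turns red; once it boots and answers again, the age drops and it turns green.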

So, that was gondul in brief. What is rather cool is that gondul is home-made for TG. The system is built to be very modular (“split into pieces”), so it is perfectly possible to use it for computer parties other than TG, but it is at least actively used at TG.

And that is not all! For others to be able to use gondul they have to get hold of it somehow. Gondul has solved this by being fully open source.

You can find all of gondul here. And if you click “Contributors” you get a list of everyone who has done something with gondul. Cool, huh?

So if someone uses gondul, they can report bugs or suggest features right on that website. That is kind of cool.

I work as a software developer in my day job, and I think open source is great fun. It is fun to see how others have solved problems, and it is nice when I do not have to solve a problem that someone else has solved before me.

Not least, it is fun to contribute.

Among other things I have (bragging ahead) fixed a bug I found in one program and corrected a couple of typos in others. These are small things, but it is great fun that code I wrote myself is used by others around the world.

Over the last few years open source has taken off, and big players like Microsoft, Google and Facebook all have software out as open source: everything from text editors to programming languages and programming libraries.

During TG last year I found out about gondul and that it was open source, and I found a bug that made gondul sometimes show an invalid status for one of the values of a switch.

Since all the source code was openly available on the internet, I found out what caused the bug, managed to reproduce it, and wrote a bit of code that makes sure this bug will not happen again.

Then I submitted a proposed change, and it was accepted and pulled into gondul.

So now I have actually contributed a tiny bit to gondul!

And this year I have continued contributing to gondul a little from the sidelines, by exploring things you can do with gondul without directly changing gondul itself.

Among the suggestions are making the NOC flash its lights if something on the weather map is in a “wrong” state, sending a message to the tech chat, and so on.

“What should we blog about?”

“Make a proper noob explanation of how the network is set up at TG. So that even people like me understand :D”

CHALLENGE ACCEPTED!

Hope you like long blog posts!

Edge and floor

So, internet at TG, how does it actually work? So that a “noob” understands…. Where to begin? At the internet? No, I will start at the other end! At your PC!

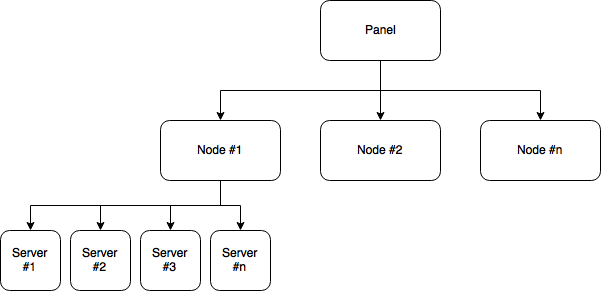

When you arrive at TG you bring a PC, find your seat, pull out your cable and plug it in. What you plug into is what we call an “edge switch” or “access switch”. That is our name for the equipment at the very edge of the network, facing our users. All participants who connect to the same edge switch are on the same local network, the same LAN in fact. If you know what an IP address is, it means that you and the people you share the switch with have IP addresses in the same “range”, or next to each other if you like, in the same series. We divide things up so that each edge switch can have up to 62 unique IPs.
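The number 62 is what you get from an IPv4 network with a /26 prefix: 64 addresses, minus the network and broadcast addresses. The Python sketch below shows the arithmetic with the standard `ipaddress` module. The prefix length is inferred from the number 62, and the address `10.1.2.64/26` is an invented example, not one of our real ranges.

```python
import ipaddress


def usable_hosts(prefix_length: int) -> int:
    """Usable IPv4 addresses in a subnet: all addresses minus network and broadcast."""
    network = ipaddress.ip_network(f"10.0.0.0/{prefix_length}")
    return network.num_addresses - 2


# A made-up range for one edge switch.
edge_network = ipaddress.ip_network("10.1.2.64/26")
hosts = list(edge_network.hosts())
first_host, last_host = str(hosts[0]), str(hosts[-1])
```

Everyone on the same edge switch gets an address between `first_host` and `last_host`, which is what “in the same series” means in practice.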

På samme lokalnettverk kan alle pc’er snakke rett med hverandre på det som kalles “lag 2” (lag 1 er det elektriske laget – lag 2 er “lan”-laget om du vil, lag 3 er “internett”).

Med direkte så mener jeg at det ikke er noe filtrering og du kan snakke uten at “vi” styrer stort med det. Det er slik LAN fungerte i gamledager. Vi kan i prinsippet bare koble alle våre svitsjer sammen slik i en stjerne. På mindre dataparties så er det ofte det man gjør: Man har en svitsj i “midten” og 3-5 andre koblet på den. Rent fysisk gjør vi det på TG også, men når du har 120 kantsvitsjer (120 deltakersvitsjer, om du vil) så får man et problem med støy!

Støy? Tenk deg at du befinner deg i et rom med 5 folk, det går fint å snakke med alle da, og det går fint å snakke en og en. I et rom med 40 går det også greit. Det går faktisk fint i et 17 mai-tog med flere tusen folk også. Inntill noen begynner å rope “SKAL VI SPILLE DUKE NUKEM 3D?” så høyt at alle hører det. Med 5 folk er det ikke et stort problem – du overlever, med 40-50 går det ok-ish. Men om et helt søttendemaitog roper, blir det bråkete. Og forskjellen på et rom og et LAN er at i et rom så avtar jo lyden med avstand, så du blir ikke forstyrret om noen står lenger bort, men i et LAN så gjør det ikke det. Heldigvis er det sånn at om du snakker kun til en pc så er det bare den som får signalet, men det er masse ting en PC gjør som går til “alle” – alt fra å finne LAN-spill til å lete etter printere til å spre virus…. Jo flere som deler samme LAN, jo mer støy.

Derfor har vi delt inn TG slik at hver kantsvitsj har sitt eget nettverk (andre ord på det samme: ett subnet, noen vil si “vlan”, men det er litt missvisende).
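To make the "62 unique IPs per edge switch" concrete: 62 usable addresses is exactly what a /26 subnet gives you (64 addresses minus the network and broadcast address). Here is a minimal sketch using Python's standard `ipaddress` module. The `10.0.0.0/16` block is a made-up example, not TG's real address plan, and the switch count is simply the 120 mentioned above.

```python
import ipaddress
from itertools import islice

# Hypothetical address block, chosen only for illustration.
PARTICIPANT_BLOCK = ipaddress.ip_network("10.0.0.0/16")
EDGE_SWITCHES = 120  # the number of edge switches mentioned in the text


def edge_subnets(block, count, prefixlen=26):
    """Return the first `count` subnets of size /prefixlen inside `block`."""
    return list(islice(block.subnets(new_prefix=prefixlen), count))


def usable_hosts(subnet):
    """Usable addresses: all of them except the network and broadcast address."""
    return subnet.num_addresses - 2


subnets = edge_subnets(PARTICIPANT_BLOCK, EDGE_SWITCHES)
print(subnets[0], "has room for", usable_hosts(subnets[0]), "hosts")
print("A broadcast stays inside one subnet, so it reaches at most",
      usable_hosts(subnets[0]) - 1, "other machines")
```

Each subnet here is one "room": a broadcast from a PC is heard only by the others on the same edge switch, not by the whole hall.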

In our example, it is as if each edge switch is a room of its own.

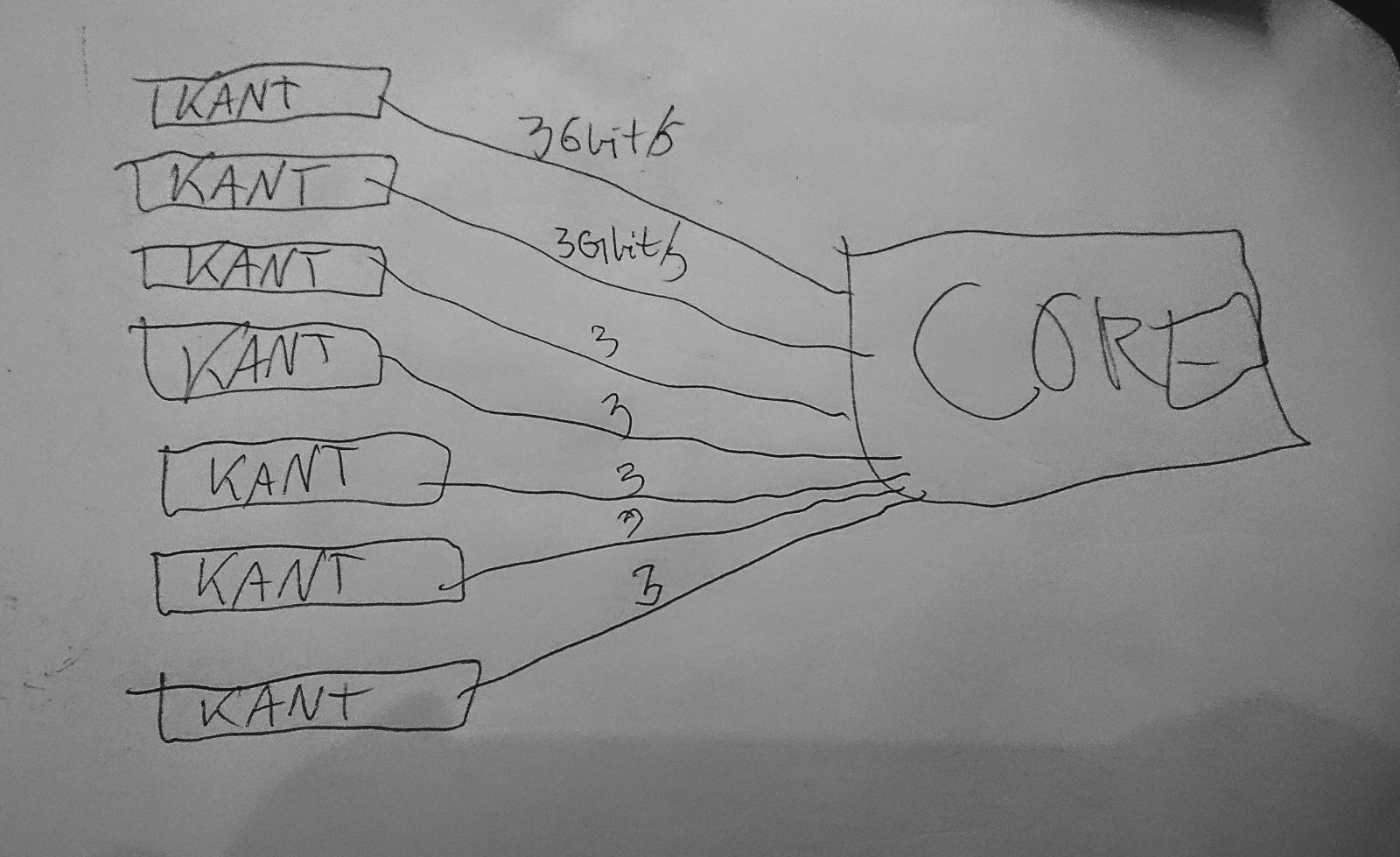

Each edge switch is connected with 3 "uplink" cables up to what we call a distribution switch (at TG we usually just call it a distro). We call it an uplink when you go from a smaller thingy to an obviously bigger/more central one... very scientific...

Distro

The distros are the switches you can see in the centre aisle. Think of it like this: you are in a room, and if you step out into the corridor you reach a distro switch.

To make sure the 40 people you share the edge switch with get somewhat better speed, and to reduce the consequences of a fault, we have pulled not one but THREE cables from each edge switch to the distro. These are set up so that to the switches they look like one single cable, but with three times the speed. If someone unplugs one of the three, we still have 2Gbit/s left to work with.

Another slightly complicating factor is that each of our distro switches consists of 3 identical physical switches. These three are set up to talk to each other over an internal protocol and behave ALMOST as if they were one and the same switch. So for all practical purposes you can think of it as a single switch, even though physically there are three of them.

Each of our distro switches is connected to roughly 10-15 edge switches. Maybe 500 participants? We also connect the wireless access points to these (we will leave wireless out of this for now).

In the old days (up to and including TG17, like, super long ago) we used these distro switches as "routers". We do not do that today. A router is a network thing that understands the addressing of internet packets, one small logical level above the "everyone in a room" level. Because our distro switches have turned out to be a bit weak, we stopped routing on them. Before, traffic going from one edge switch to another edge switch on the same distro could be routed "directly": from edge switch A to the distro to edge switch B. They can no longer do that. Instead, all traffic going OUT from an edge switch TO a distro is sent up to... core!

CORE

So we have edge switch -> distro -> core?

So our distro switches are connected to something we call "core". We do this by pulling 2 fiber cables from the ceiling of Vikingskipet down to each of the distro switches. Each of these fiber cables can carry 10Gbit/s. And just as we did with the three cables from edge to distro, we have set it up so that it appears as one logical cable.

In the "old days" we had an actual router in the ceiling. We no longer do, because fiber cables have a property your TP cable does not have: you can pull fiber cables a LONG way! A TP cable has a maximum length of 100 metres. Fiber of the kind we use can run for tens of kilometres. That way we avoid things like what we had during TG17:

So when the 2 fiber cables from a distro come up into the ceiling, they are plugged into a "patch cabinet". This is quite simply just a dumb connection cabinet. At the other end of the cabinet there is a cable with 24 fiber cables, which runs along a "bridge" in the ceiling and down to the "NOC" you can see on the stands, where Tech:Net sits with the nice green lamp. We have installed this fiber cable permanently in Vikingskipet.

Since we have 9 distro switches, each using 2 fiber cables, we "only" use 18 of the 24 fiber cables now and have 6 that we can use for other things.
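The link bundling and the fiber count are simple arithmetic, but it can help to see them side by side. A minimal sketch, assuming 1Gbit/s per edge uplink (implied by "2Gbit/s left" when one of three is unplugged); the `Bundle` class and its names are made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bundle:
    """A group of identical links that the switches treat as one logical link."""
    links: int
    gbit_per_link: int

    def capacity(self, failed=0):
        """Aggregate capacity in Gbit/s when `failed` member links are down."""
        working = max(self.links - failed, 0)
        return working * self.gbit_per_link


edge_uplink = Bundle(links=3, gbit_per_link=1)     # edge switch -> distro
distro_uplink = Bundle(links=2, gbit_per_link=10)  # distro -> core

DISTROS = 9
PAIRS_IN_ROOF_CABLE = 24
pairs_used = DISTROS * distro_uplink.links
pairs_spare = PAIRS_IN_ROOF_CABLE - pairs_used

print("edge uplink:", edge_uplink.capacity(), "Gbit/s,",
      edge_uplink.capacity(failed=1), "Gbit/s with one cable unplugged")
print("distro uplink:", distro_uplink.capacity(), "Gbit/s")
print("fiber used:", pairs_used, "spare:", pairs_spare)
```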

Down by the NOC there is a core router. This is a more powerful thing; the one we borrowed for TG18 and are aiming for at TG19 can weigh up to 86kg, so it is nice not to have to carry it all the way up into the ceiling.

Core looks roughly like this:

(Here we have our technician on the case! The big one is core, the small one to the right is a ring node. We will get to that!)

So all the distro switches are connected to core, and if you on your edge switch want to talk to your neighbour sitting on a different edge switch, or to someone at the complete opposite end of the ship, the traffic goes "up to" this router.

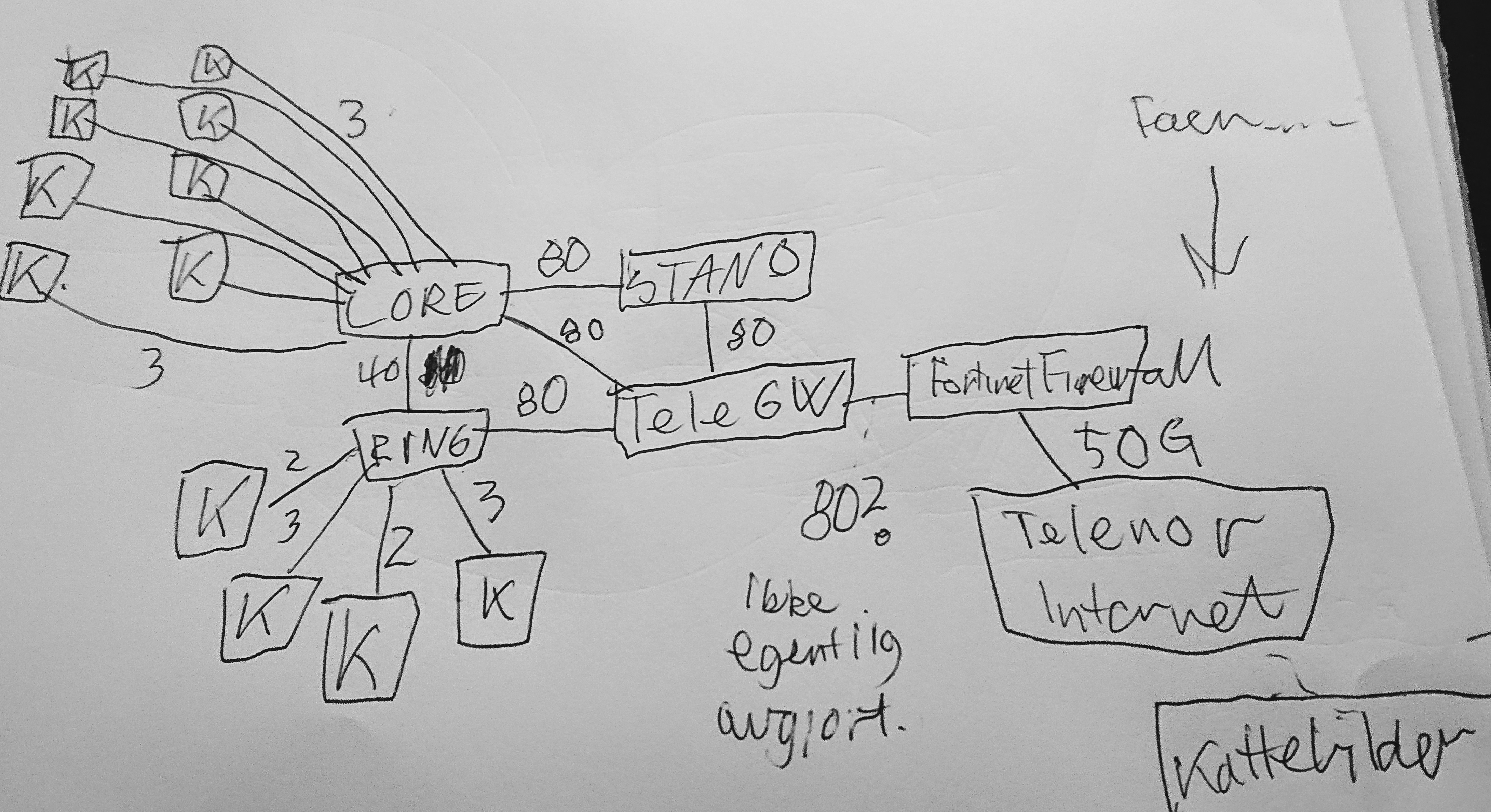

To draw this with high-tech tools....

This is really a very traditional star network, without much that is exotic about it.

From an "IP perspective" it looks a bit different. As I confused you with earlier, we do not route on our distro switches, so they are just a kind of "cable collector". Purely logically it looks more like this:

Pretty straightforward!!!

The ring

But then it gets a bit awkward. We also want to get out on the INTERNET! And there are lots of odd little things around the ship that are NOT connected via "core". What we have talked about so far is only what we call "the floor": all the "regular" edge switches.

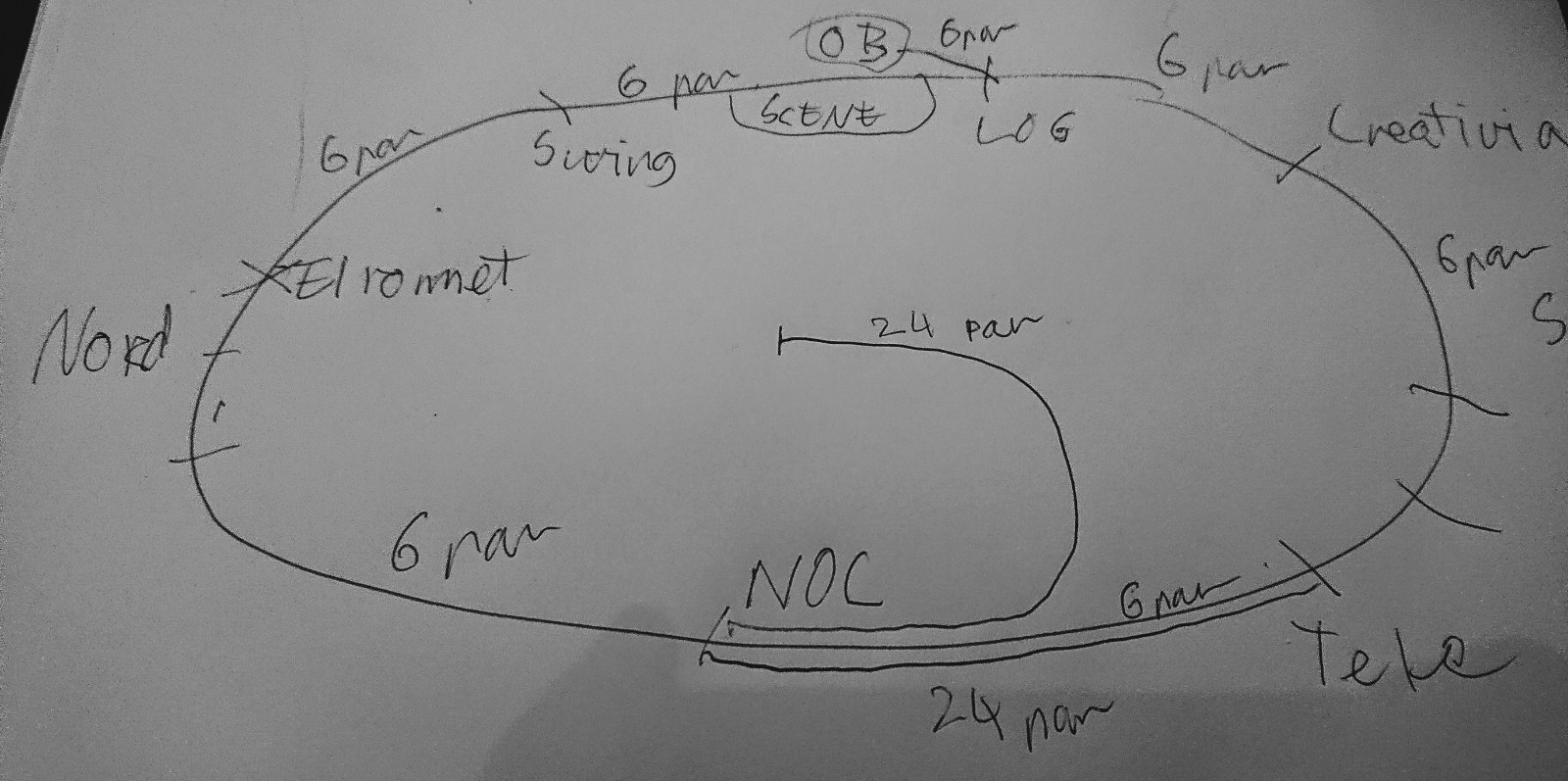

In Vikingskipet there are lots of places where we need network but cannot easily connect via "the floor". To solve this, the Tech crew has installed a permanent fiber infrastructure in Vikingskipet, which we call "the ring". It is quite simply 6 fiber cables that together form a ring around the whole of Vikingskipet. The "nodes" in the ring are located as follows:

In the "NOC", that is, on the stands in the west where core also sits. This node is used for the Tech:Net crew, for all the crews sitting on the stands, the sponsor rooms, down to the reception (no idea exactly where THAT stretch runs! But somebody knows!) and lots more. This is the most used node. You can glimpse the ring node by the NOC in the picture of core above.

Continuing north we get to the "el room" (electrical room), a room very few people enter, in practice only a few from Tech:Net and HOA (the owners of Vikingskipet). This is where all the power for the ship comes in. It is below the auditorium at the north end. This node is used, among other things, to pull cables to the auditorium and out to certain sponsors and other things at the far north.

Eastwards, the next stop is "swing", a storage room in the north-eastern curve. This is behind the stage and to the left, and is used, not surprisingly, for the stage and everything that happens there, among other things (sometimes we have also dropped an extra fiber from the ceiling, but not recently, I think).

The next stop clockwise is "log". This is the storage room behind and to the right of the stage, where the logistics crew sits, among others. And again, it is used for exactly that: logistics and similar odds and ends.

Further on we go down to "south", the storage room in the south-east where "creative" has usually been based. And again, it is used for creative, wireless access points, and NOT LEAST the camera we have at the very south end of the ship!

The next stop is the telematics room. This is by far the most critical room for Tech:Net. It is a tiny "technical room" in some corridors in the south-west, not far from the entrance you use at the main admission.

From here it continues back to the NOC, and the ring is closed. Each stretch of the ring has 6 fiber pairs, so we have some room to spare, since we normally use only one pair for the "logical" ring. In addition there is an extra cable from the NOC down to the tele room, so we have 6 + 24 pairs there. We also have one last cable that we do not actually use, running from logistics up to the corridors on the floor above, behind the stage. We have occasionally used it when a bus with production equipment was parked back there.

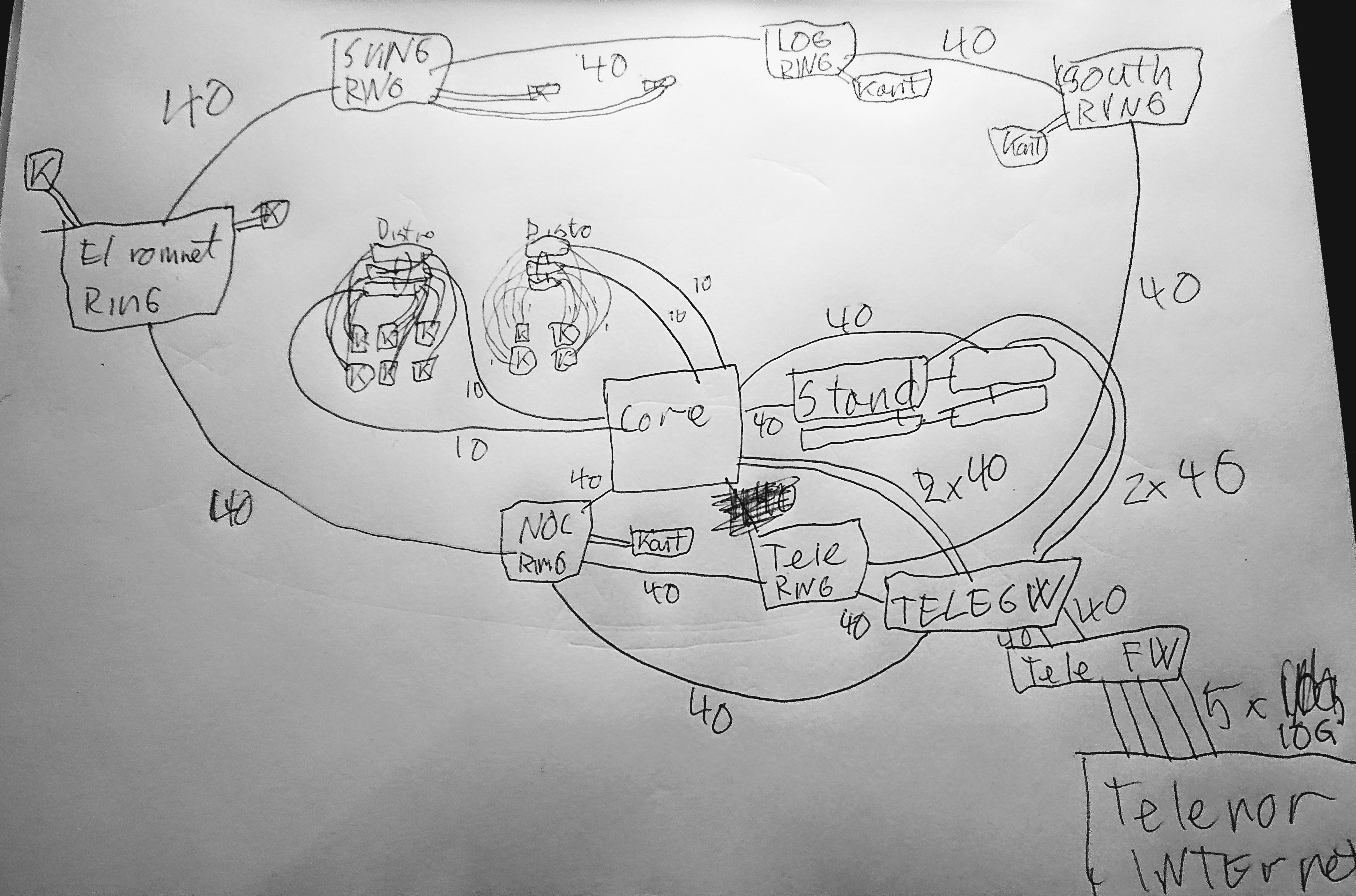

Here I have tried to draw only the fiber cables that are permanently installed in the ship, including the 24 pairs that go up into the ceiling.

So for the reception to get connected, we typically pull two TP cables all the way from the reception up to the NOC, through various cable trays and corridors (do not ask me where they go, this is where Tech:Support steps in!).

At each of the ring "nodes" we have a switch that is a notch sharper than our distro switches (the distros are EX3300 and the ring consists of 6 EX4300 or EX4600. Those are bigger numbers, so they must be better!).

To save ourselves some work, we have set up the ring so that all 6 nodes appear as one logical switch, almost exactly what we do with our distros. So even though there are physically 6 switches, they talk to each other and pretend to be just one switch.

They are also connected with 40Gbit/s fiber in each direction. Since it is an actual ring, this means that if we "lose" a cable (e.g. someone unplugs something or we lose power), traffic will still flow in the other direction.
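The "traffic flows the other way" property is easy to check with a toy model. This is a minimal sketch of the ring as a graph, using the six node locations described above (the short node names are my own labels). It only models reachability, not how the switches actually fail over.

```python
from collections import deque

# The six ring nodes, in order around the ship (labels are illustrative).
RING = ["noc", "el", "swing", "log", "south", "tele"]


def ring_links(nodes):
    """Link each node to the next one, and the last node back to the first."""
    return {
        frozenset((nodes[i], nodes[(i + 1) % len(nodes)]))
        for i in range(len(nodes))
    }


def reachable(start, links):
    """Breadth-first search: which nodes can `start` reach over `links`?"""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for link in links:
            if node in link:
                (other,) = link - {node}
                if other not in seen:
                    seen.add(other)
                    queue.append(other)
    return seen


links = ring_links(RING)
for broken in links:
    survivors = reachable("noc", links - {broken})
    print("lost", sorted(broken), "-> all nodes reachable:", survivors == set(RING))
```

Losing any single link leaves every node reachable. Losing two links splits the ring into two islands, which is why a ring protects against one fault, not several.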

The ring is more or less the first thing we set up. That is because we actually need it to get online ourselves!

One small difference between the ring and a distro is that since the ring consists of better switches, we are able to route on it. If the people in reception want to talk to a PC on the stage, that traffic stays on the ring and does not go via any core router.

Tele

Ok, but how do the floor, the ring and the internet fit together?

Then we need to talk about the tele room!

In the tele room we have three important things:

- A "ring" node.

- A "tele-gw": router for internet and local traffic. I think it was 4 switches in one logical unit at TG18?

- A "tele-fw": a firewall.

I am going to leave the firewall out of this.

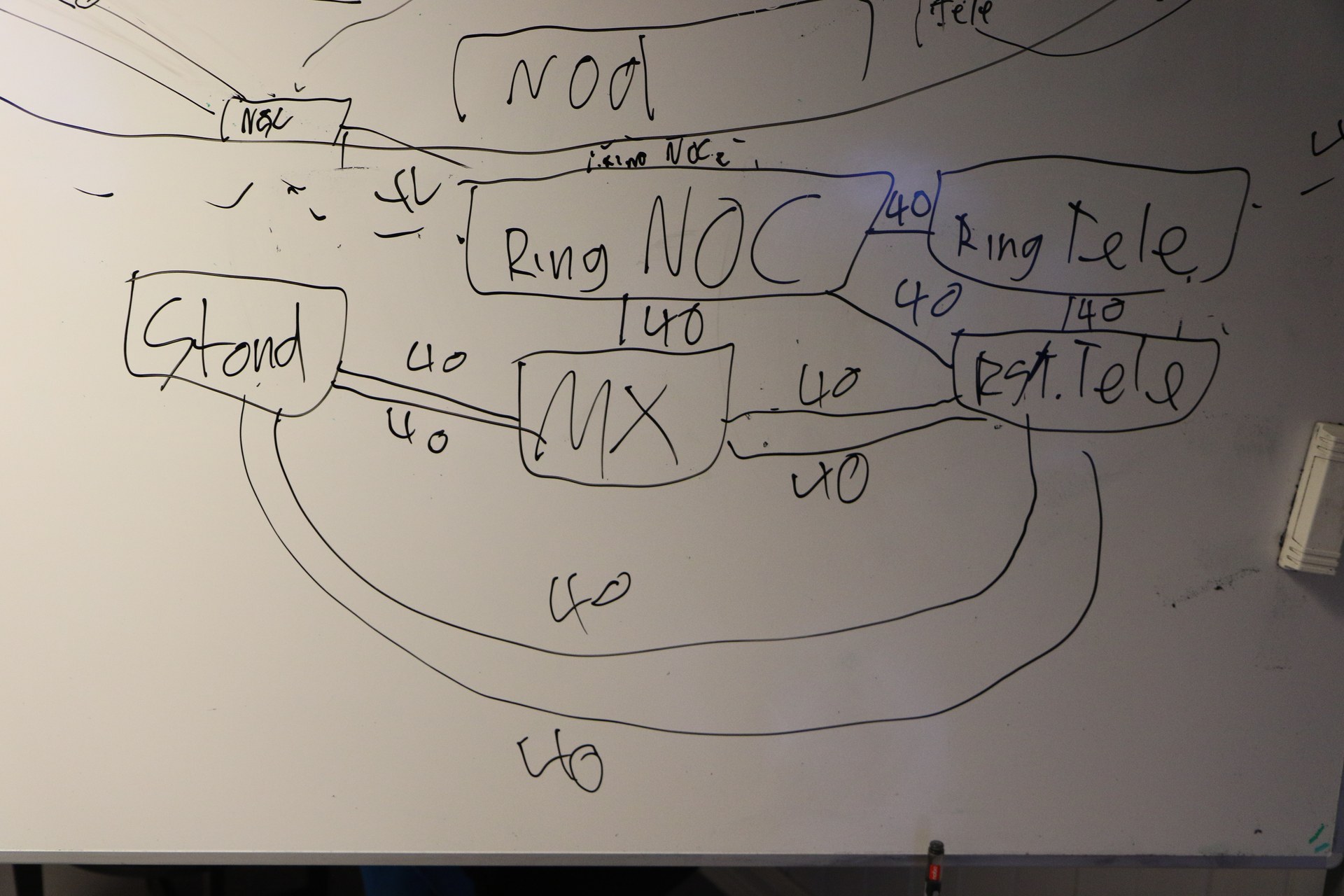

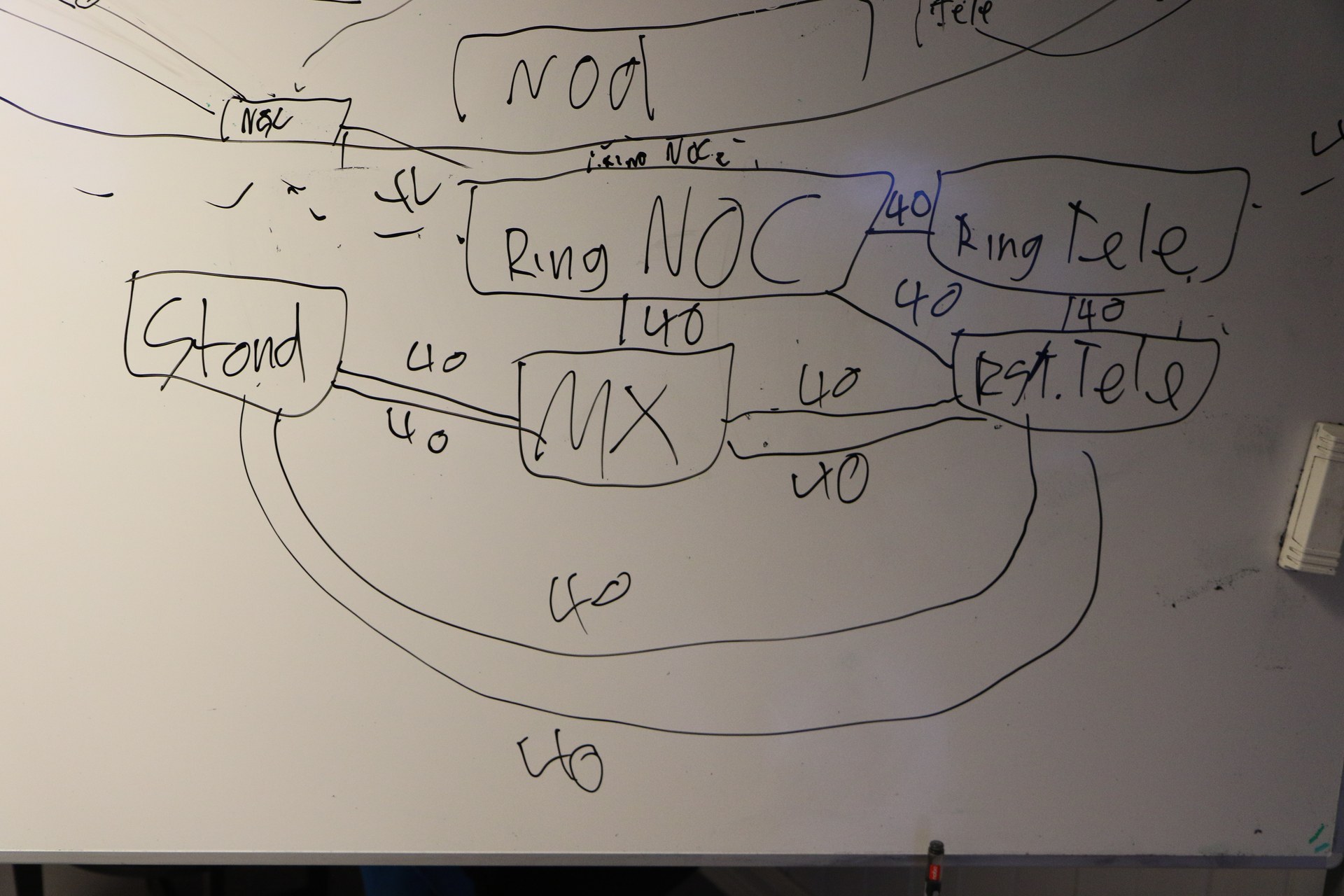

So we have talked about the ring. It should be easy to guess by now, but the "ring node" in the tele room is naturally connected to the "tele-gw" router. This link is 40Gbit/s.

What is less obvious is that the ring node by the NOC is also connected to "tele-gw" with 40Gbit/s. It runs in the same physical cable as the link connecting the two ring nodes at NOC and tele, but on a different pair...

This is both confusing and perhaps hard to see the point of. But think about what happens if something goes wrong with the ring node in the tele room. We said that the ring goes in a circle, so traffic still flows if we lose a node, but that does not help if that node was the one with the only connection to the internet! Hence this slightly convoluted triangle. Logically it means the ring has 80Gbit/s towards "tele-gw". Physically it is 40Gbit/s from "ring noc" and 40Gbit/s from "ring tele"...

Tele-gw in turn is connected to the internet with 5 10Gbit/s links, via a firewall (we have used a few different solutions here, but to avoid confusing things TOO much we will leave it at that).

Core, which is where all the traffic from "the floor" arrives, is also connected to tele-gw via two 40Gbit/s links.

In addition we have a "stand" with servers that you may have seen. This is connected with two 40Gbit/s links to the core router and two 40Gbit/s links to "tele gw"...

If this is confusing to you, that is no surprise. I was not fully aware of the details here myself until this weekend. But we drew this lovely drawing on the whiteboard (ignore the top bits, they are part of a different drawing).

Here "MX" is short for "MX480", the core router. And "rs tele" is the same as "tele gw". Naming things is hard.

So let me try two more drawings: one with physical cabling and one with logical routing!

First the physical chaos:

Then the logical:

....

As you can perhaps see, the logical network at TG is not extremely complicated, really. But as my scribbles perhaps hint at, this is not something I have a 100% overview of at all times either. It takes a fair bit of thinking. And I had to double-check a few things with the rest of Tech:Net while wrapping up this blog post..... We have tried to simplify where we can, but it is not ENTIRELY simple. What may leave a couple of questions is how routing works when there are several ways to get from A to B, and how it works if we "lose" a link...

In short... all the routers talk to each other over a protocol and tell each other what they can reach. So if a router goes down, its neighbours know everything that "was behind" it and how they can reach everything else. It will be noticeable as a small "blip" in the network, but otherwise not noticeable in practice.
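Here is a toy version of "several ways from A to B", using the logical pieces described above: core, stand, tele-gw, the ring (as one logical switch) and the internet. It is a simplified sketch: it picks the path with the fewest hops using a breadth-first search, whereas a real routing protocol works with link costs. The link list is my reading of the text, not an export of the real topology.

```python
from collections import deque

# Logical links as described in the text (simplified; one entry per neighbour pair).
LINKS = {
    ("core", "tele-gw"),
    ("core", "stand"),
    ("stand", "tele-gw"),
    ("ring", "tele-gw"),
    ("tele-gw", "internet"),
}


def shortest_path(links, src, dst):
    """Return the path with the fewest hops from src to dst, or None if cut off."""
    neighbours = {}
    for a, b in links:
        neighbours.setdefault(a, set()).add(b)
        neighbours.setdefault(b, set()).add(a)
    previous = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:
                path.append(node)
                node = previous[node]
            return path[::-1]
        for nxt in sorted(neighbours.get(node, ())):
            if nxt not in previous:
                previous[nxt] = node
                queue.append(nxt)
    return None


print(shortest_path(LINKS, "core", "internet"))
# Simulate losing the direct core <-> tele-gw connection:
print(shortest_path(LINKS - {("core", "tele-gw")}, "core", "internet"))
```

With the direct link gone, traffic from the floor detours via stand. That recalculation is the "blip".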

I do not know if I succeeded in making this understandable for everyone, but maybe a little more understandable? There are lots of small details one could fill in, but this is really the most important part of our infrastructure.

FOOTNOTES

Since quite a few people who actually know some networking will probably read this and nitpick/wonder:

I consistently write about fiber cables and mean PAIRS. So we have GU48 up into the ceiling: 24 pairs, 48 fibers. I chose to simplify a bit.

So we terminate vlans mainly on core and ring, not on distro. We use VirtualChassis for the ring, the distro switches and stand.

There are of course many more edge switches than I have drawn, and the odd curiosity I may have forgotten, but that is not really important.

I have skipped WiFi. We connect the APs to distro or ring as needed and put them in an AP network, I think, and they tunnel the traffic to a wifi controller in stand anyway. As far as I know, they run in the same IP network.

We run OSPF internally and BGP towards the internet.

Last year we had "firewall on a stick", which is probably not what we will be using this year. Last year we took traffic from the internet in on tele-gw, routed it out to tele-firewall, which firewalled it and sent it back on a different vlan to tele-gw. Simple/logical, but a bit messy to explain.

This weekend the Tech crew for The Gathering 2019 gathers to begin the last part of the planning for Easter. This is an annual thing, with somewhat varying themes and agendas. This year we have tried to bring along more people from Tech:Support, since they are a drastically underrated bunch.

One of the things we are betting on this weekend is something I have not been part of before: we are going to talk our way through the entire rig-up, step by step. I suspect it will give us plenty of opportunities for digressions, to put it that way!

Why do this?

Because I do not think there is a single person in the crew who actually has a good overview of our rig-up as a whole, and we hope to save many hours through small changes.

It comes down to things like "Why do we spend all of Friday just setting up our own workplace in the NOC?" and "is it because we do things slowly that setting up the distro switches takes so long, or is there another reason?"

I will not go into too much detail (because Marius is right around the corner to borrow my sofa), but I think this will be... exciting.

Other things we are looking at this weekend are various small things and small demos. Ansible for running commands on switches, netbox for IPAM, and quite a lot more.

If we have time we will also run through a bunch of standard Juniper things, some of it close to banal. Because you know what? There are actually only one or two people in Tech who work with Juniper regularly now. We are not experts, actually. Most of us are in this because we learn an insane amount every single year.

I promise to come back with an update, but in the meantime you can also join us on discord (we have become young and hip now! Discord instead of IRC!!!!): INVITE LINK TING

When I first started organising LAN parties in earnest, we started with 30-50 people. If we skip a guest appearance at TG02, the first time was BølerLAN in 2003. I had just moved out of Oslo, and no LAN had ever been organised at Bøler before. Even though we ended up without internet (nobody knew the username/password and such for the ISDN link the youth club had), it is one of the best events I can remember.

Organising smaller parties was incredibly fun, but actually getting hold of equipment was not easy. Figuring out how to get internet sorted in a reliable way took a few years. Not because we could not set it up, but because we had to learn how to talk to ISPs and the like in a language that got through.

At TG it is the complete opposite. Compared to BølerLAN, we have an absolutely enormous scale. We have a couple of hundred switches sitting in a warehouse (!!! not in my storage room, but an actual WAREHOUSE!), and we have a dedicated crew that can help with sponsor agreements. And we HAVE sponsors! We actually have fiber cables (!), and we do not run the DHCP server from my private PC. We have a dedicated crew responsible for transporting things to where they need to be, when they need to be there. We have a completely different everyday reality than pretty much every other LAN party in Norway.

But hey, here is the thing. We also have "jira" (a project management tool), and planning for TG19 that takes close to a year. Separate tasks that consist only of figuring out which tasks we actually have within a given area. We get (possibly rightfully) told off by people who use terms like "HSE" when we do slightly safety-wise questionable things on top of tall things (ahem, which we are not supposed to do). We have to deal with customs declarations. The amount of stuff we have to COUNT before and after each event is in itself worth counting. We have to coordinate when we roll out switches during rig-up so it does not collide with the plans of those rigging the stage, those rigging power or those hanging something or other from the ceiling. All in all, organising TG is a completely different thing.

But this weekend I, at least, am going back to my roots a bit. This weekend spillexpo takes place at Lillestrøm, and during it "The Gathering: Origins" (https://origins.gathering.org/) is being held. This is what I would call a "normal" LAN. If you have nothing on this weekend, drop by (Are you seeing this, Dawn? Do I get a gold star now for advertising a bit?)! The size is maybe 70 people or so?

On the technical side I have a couple of other TG people with me. We would have liked non-TG people too, but for me this was something I am just doing because someone asked me. After 16 years of LAN parties with everything from 30-40 participants to 5500+, going "back" to something like this is quite fun.

That we can borrow TG's equipment from KANDU helps, of course. But then it turned out that we also have a pretty solid network of contacts. So when we figured out that we might need a firewall, because we are (still) a bit unsure whether we need to run NAT or not, I asked around a bit. It then turns out, of course, that Harald (in Tech:Net and from Fortinet), fantastic as he is, has a gigantic Fortigate firewall lying around at home, the one we plan to use at TG19. "And by the way, Kristian, I also have some Fortinet switches that the Fortigate can manage, so you do not have to configure switches?" Yeah ok, so we have a warehouse full of switches for TG, but it turns out that HARALD has basically the entire network we need lying ready to use at home, so we do not even need to borrow from TG/KANDU? What happened to the days when I could barely scrape together enough TP cables for a LAN!?

But then I was shooting the breeze with Lasse, and as we often do, we got onto the topic of LAN parties. And we reminisced about the old days and such. I was thinking that small parties like this are quite fun. And he mentioned something that I perhaps knew but had not thought about: there are parties out there struggling to find a tech crew.

People, we have to fix this!

There are people in this country who love organising LAN parties and can solve almost anything. There are people sitting on equipment they WANT to lend to LAN parties, because then they get to test it a bit. And there are people who cannot get hold of a tech crew for their LAN?

This is just too silly.

So, I do not know how we solve this, but I can contribute a bit. We tried a tech-meetup SLACK, but it died out rather quickly. I cannot be bothered to propose a better communication platform right now (that gets us into tasks that belong in the "Jira" paragraph above rather than the fun paragraph), so instead I propose:

Are you struggling to get hold of a tech crew for a LAN somewhere? Struggling with equipment? Internet? Whatever technical? Get in touch with us/me and we will see if we can figure something out. Either just write a comment here, or mail me at kly@kly.no (or ping me on EFNet, I am kly, but response time varies a lot). Nothing formal or anything. If my inbox explodes, that is fine.

If anyone else has had the energy to set something up to solve this and wants to spread the good news, I am happy to write about it here. And/or give input. Personally I am not a Facebook user, so I am opting out of that one (but I am an old geezer by LAN standards, so do not let me dictate the solution).

If you are interested in helping unspecified LAN parties when it suits you, you could add yourself in the comments below as well?

Point being: there is help to be had, if you ask! We just suck at self-organising across LAN parties!

Good day!

I was offered the chance to write about Game and the technical equipment and software we use, and of course said yes!

Server hardware:

This year our kind sponsor Nextron has arranged physical servers for Game, whereas previously we had virtual machines to run the software. There will be 5 servers in total: 2 for CS and 3 for Minecraft, one of which is a spare in case the other two should struggle with something. We run the SSDs in software RAID1 for reliability, and have access to the servers via IPMI. The specifications are as follows:

| Server | CPU | RAM | Disk | Network |
|---|---|---|---|---|
| Game - CS:GO #1 | 20 core Intel Xeon E5-2640v4 2.40GHz | 64 GB | 2×1 TB SSD | 2×10 GbE SFP+ |
| Game - CS:GO #2 | 20 core Intel Xeon E5-2640v4 2.40GHz | 64 GB | 2×1 TB SSD | 2×10 GbE SFP+ |
| Game - Minecraft #1 | Intel Core i7-7820X 3.60GHz, turbo 4.3GHz/4.5GHz | 64 GB | 2×1 TB SSD | 2×10 GbE SFP+ |
| Game - Minecraft #2 | Intel Core i7-7820X 3.60GHz, turbo 4.3GHz/4.5GHz | 64 GB | 2×1 TB SSD | 2×10 GbE SFP+ |
| Game - Minecraft extra | Intel Core i7-7820X 3.60GHz, turbo 4.3GHz/4.5GHz | 64 GB | 2×1 TB SSD | 2×10 GbE SFP+ |

In addition we have two smaller VMs with 4GB RAM, 50GB HDD and 2 vCPU cores that will run the control panel for the servers. More on that below.

Server software:

This year both Minecraft and CS will run on pterodactyl, which is new for CS at TG. With two identical systems, any troubleshooting of pterodactyl will probably go better, since we have several people in the crew with pterodactyl experience. If it does not go as planned, we also have a fallback: the CS system that ran at the previous TG. For those unfamiliar with it, pterodactyl is not a medicine (as I first thought) or a dinosaur (as Kristian thought), but a management panel for game servers. The software is open source and offers a pile of features, from controlling multiple nodes and API control to rapid deployment of servers and much more. It is docker based, so you can write your own docker images to deploy, which is what we do. More info at https://pterodactyl.io/

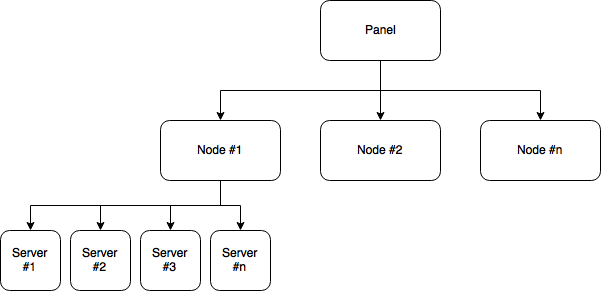

CS and Minecraft run independently of each other, each with its own panel on its own virtual machine. It is entirely possible to run both Minecraft and CS servers from one panel, though. The panel is then connected to our physical servers as nodes, where we install the OS, docker and the daemon, and upload the configuration file to connect. On the nodes we can then bring up the docker images that run the actual game servers. The setup can look something like this:

We then use 32 servers for CS spread across two nodes, and have resources for more should more teams sign up. At the same time we can run up to 128(!) Minecraft servers.

The CS machines will run a GOTV relay so that participants can watch a stream directly from a player if they wish, rather than through Twitch and the like.

Another system we use is Unicorn (Unified Net-based Interface for Competition Organization, Rules and News, phew). Unicorn is TG's competition system, built to run the competitions at The Gathering from the moment a participant wants to enter until they are standing on stage with their prize. It was first used in 2015 and is continually updated with new features and optimisations.

The Unicorn backend is written in PHP with a MySQL database server. The frontend is for now tightly coupled to the backend, and uses an MVC model with Smarty templating. The long-term plan is to separate frontend and backend and move to a React-based (or similar) frontend. For file storage we use tus.io for uploading large files. This lets us resume an upload if it should fail. Files are then moved to AWS S3, which is used as a CDN through AWS CloudFront.

Creative's competitions are handled directly through UNICORN, where administrators can manage every aspect of their competitions. This covers everything from creating a competition and receiving entries, with qualification and disqualification, to reading out results and so on. For Game we have additionally implemented support for Toornament, which handles brackets.

Use of APIs:

For Minecraft we use Unicorn. We send queries to Unicorn's API, which returns the registered teams. If it finds a team, it sends requests to pterodactyl and creates a server. Once the server is up and running, the system sends commands to set it up. CS uses eBot for administration and for starting/stopping matches, and it also collects demos and logs that can afterwards be used to make nice graphs and statistics.
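The Minecraft flow above (look up teams, create a server per team, then send setup commands) can be sketched like this. Everything here is a stand-in: `FakeUnicorn` and `FakePanel` are hypothetical in-memory classes invented for the example, not the real Unicorn or pterodactyl APIs, and the server naming and setup commands are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class FakeUnicorn:
    """Hypothetical stand-in for the Unicorn API: just holds team names."""
    teams: list

    def registered_teams(self):
        return list(self.teams)


@dataclass
class FakePanel:
    """Hypothetical stand-in for the panel: records what it was asked to do."""
    servers: dict = field(default_factory=dict)

    def create_server(self, name):
        self.servers[name] = []
        return name

    def send_command(self, server, command):
        self.servers[server].append(command)


# Illustrative console commands; the real setup commands are not given in the text.
SETUP_COMMANDS = ["whitelist on", "difficulty normal"]


def provision(unicorn, panel):
    """Create and configure one server per registered team that lacks one."""
    created = []
    for team in unicorn.registered_teams():
        name = f"mc-{team}"
        if name in panel.servers:
            continue  # already provisioned on an earlier run
        server = panel.create_server(name)
        for command in SETUP_COMMANDS:
            panel.send_command(server, command)
        created.append(server)
    return created


unicorn = FakeUnicorn(teams=["alpha", "beta"])
panel = FakePanel()
print(provision(unicorn, panel))
print(provision(unicorn, panel))  # second run finds nothing new to create
```

Skipping teams that already have a server means the loop can be run repeatedly as new teams sign up.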

I hope this guest article has been helpful for people who wonder about the technical side of Game, and for people who want to apply to join us in CnA (Competitions and Activities)!

Special thanks to Jo Emil Holen for sharing a lot of information about Unicorn!

And a big thank you to everyone in Game who helped describe their technical tasks and the systems we use!

I just brought contrib.gathering.org to life, but this will be a two-part blog post.

What is contrib.gathering.org?

Let us start with the most important part: contrib.gathering.org is a collection of links to things that are loosely related to TG. I hope the list will explode as all of you out there who make things get your content added. Help us!

The easiest way to do that is via github. Ideally you create a pull request, but a regular issue works too, of course.

We hope to collect as many fun links (related to TG) as possible. Photo galleries, competition entries, or whatever it may be. Use your imagination!

Part two: Deploy

The second part of this post is the stack behind it. Previously, everything we host for TG has mostly lived on the same enormous server, but recently we have started moving towards somewhat more modern methods. Contrib is currently by far the most modern pipeline we have. It will hopefully be a positive contribution towards getting other, more important websites moved over to more modern and flexible development environments.

The part with rst2html and such for generating html is not very interesting.

What is fun is that this runs on Kubernetes. Today it runs on Google Cloud, but it can of course run elsewhere too. We are trying out Google Cloud first.

To deploy to "prod", all we need to do today is commit to the master branch in git. When that happens, an automatic build is triggered in Google Cloud, which finishes by doing a deploy to the Kubernetes cluster (also in Google Cloud). This deploy is done as a rolling update.

In this case it is rather overkill, since there is no code behind it, just static files, but it is a good test and will be reused for other projects.
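To make "build, push, deploy" concrete, here is a minimal sketch that only assembles the command lines such a pipeline would typically run; it does not execute anything. It is an assumption about the shape of the steps, not a copy of the real build configuration, and the image, deployment and container names are hypothetical.

```python
def deploy_steps(image, commit, deployment, container):
    """Return the commands for one build-push-deploy run as argument lists."""
    tag = f"{image}:{commit}"
    return [
        # Build the container image from the Dockerfile in the repository root.
        ["docker", "build", "-t", tag, "."],
        # Upload the image to the registry so the cluster can pull it.
        ["docker", "push", tag],
        # Point the deployment at the new image; with the default deployment
        # strategy Kubernetes replaces the pods gradually (a rolling update).
        ["kubectl", "set", "image", f"deployment/{deployment}", f"{container}={tag}"],
        # Wait until the rollout has finished.
        ["kubectl", "rollout", "status", f"deployment/{deployment}"],
    ]


# All four names below are made up for the example.
steps = deploy_steps("gcr.io/example-project/contrib", "abc1234", "contrib", "web")
for step in steps:
    print(" ".join(step))
```

Tagging the image with the commit means every deploy refers to one exact build, which makes rolling back a matter of pointing the deployment at an older tag.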

For those interested, everything for deployment lives in the same git repo. A quick walkthrough:

~~~~.text
- /Dockerfile: Used to build a container; you can run it on
  your local laptop if you like.
- /cloudbuild.yaml: Used by the automatic build process.
  In short, it defines that on a new git commit a docker
  build, push and kubernetes deploy should be carried out.
- /k8s/: The resources that have been set up in Kubernetes.
  They are basically only applied once by hand, or when
  something changes.
- /k8s/deploy.yaml: The actual deployment file, which in
  practice just defines which image we use. The image is
  updated automatically by the build script.
- /k8s/service.yaml: Makes the deployment available to
  other services in the cluster.
- /k8s/ingress.yaml: Defines that the service should be
  exposed on the internet, via the "ingress" service. It
  specifies the domain and asks for SSL termination, via
  let's encrypt.
~~~~

(Yep, great to have a blog where bullet points are broken)

What is not in that repo is the rest of the infrastructure: the ingress controller and the let's encrypt automation. There may be more about that when it is actually 100% finished. Technically, all of this runs on my personal gcloud account/cluster today.... Because I left the login/password for the KANDU/TG account at work (... forgot "pass git push", for the curious).